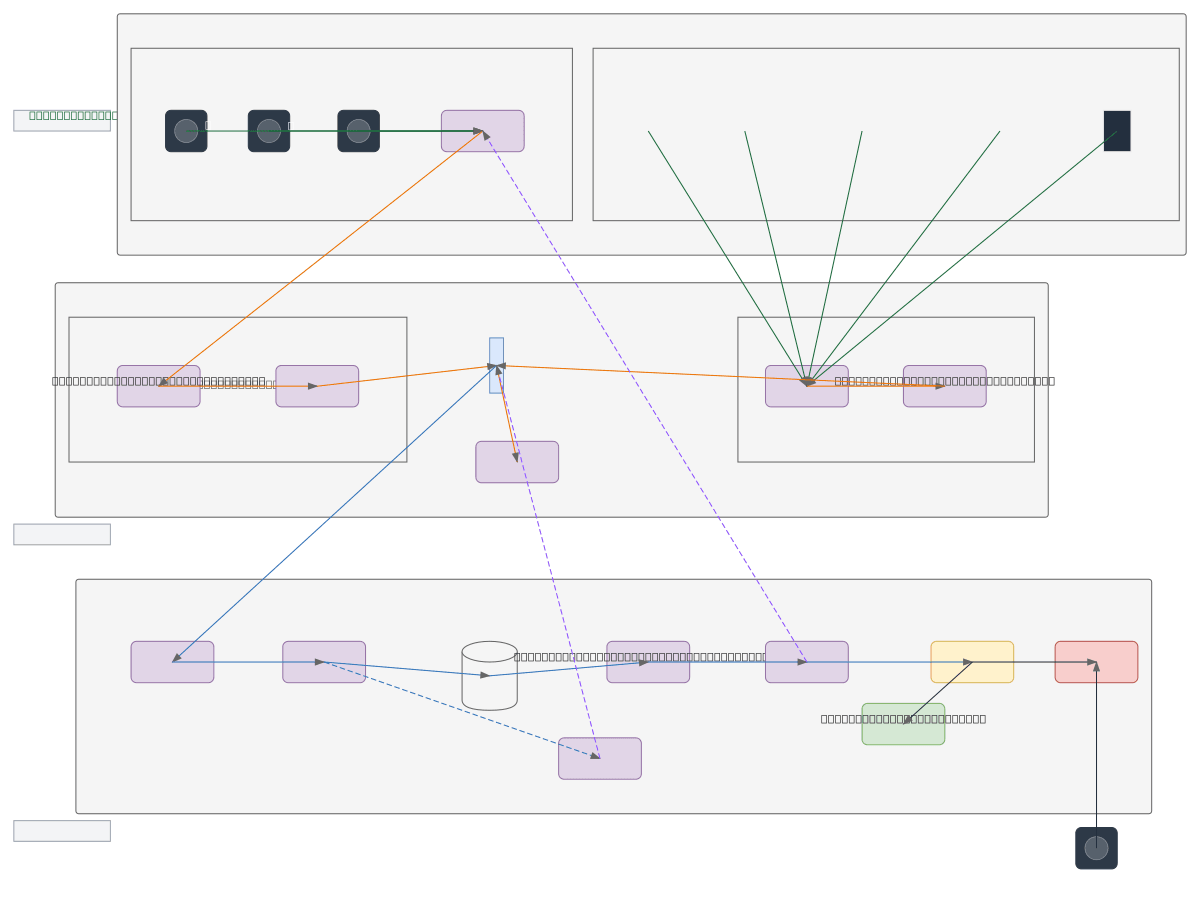

Smart Traffic Analysis System Architecture

About This Architecture

The Smart Traffic Analysis System integrates multi-sensor perception (traffic cameras, IR sensors, ultrasonic sensors) with edge-based YOLOv8 deep learning inference and cloud analytics for autonomous traffic signal control. Data flows from distributed sensor inputs through an Arduino Nano microcontroller running vehicle detection logic, then to an IoT Gateway for cloud ingestion, stream processing, and time-series storage. This architecture enables real-time vehicle counting and classification at the edge while supporting centralized ML model training and dashboard monitoring, with the dashboard served through a WAF-protected CDN. Deploy this pattern to reduce latency for time-critical signal decisions, optimize traffic flow, and scale analytics across multiple intersections. Fork the diagram on Diagrams.so and adapt it: customize sensor configurations, retrain YOLOv8 models, or integrate alternative microcontrollers and cloud backends.
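The edge-to-gateway step of this data flow can be sketched roughly as follows. This is a minimal illustration, not the system's actual code: the `Detection` shape, the `VEHICLE_CLASSES` set, the 0.5 confidence threshold, and the JSON message fields are all assumptions made for the example, and the detections are hard-coded where a real deployment would take them from the YOLOv8 model.

```python
import json
from collections import Counter
from dataclasses import dataclass

# Hypothetical set of classes treated as vehicles; the source does not list them.
VEHICLE_CLASSES = {"car", "truck", "bus", "motorcycle"}


@dataclass(frozen=True)
class Detection:
    """One detection as an edge model might report it (assumed shape)."""
    label: str
    confidence: float


def count_vehicles(detections, min_confidence=0.5):
    """Count detections per vehicle class, dropping low-confidence and non-vehicle hits."""
    counts = Counter(
        d.label
        for d in detections
        if d.label in VEHICLE_CLASSES and d.confidence >= min_confidence
    )
    return dict(counts)


def build_telemetry(intersection_id, counts, timestamp):
    """Serialize one counting window as a JSON message for the IoT gateway (assumed schema)."""
    return json.dumps(
        {
            "intersection_id": intersection_id,
            "timestamp": timestamp,
            "counts": counts,
            "total": sum(counts.values()),
        },
        sort_keys=True,
    )


if __name__ == "__main__":
    frame = [
        Detection("car", 0.91),
        Detection("car", 0.42),     # below threshold, ignored
        Detection("bus", 0.77),
        Detection("person", 0.88),  # not a vehicle, ignored
    ]
    print(build_telemetry("junction-01", count_vehicles(frame), 1700000000))
```

Sending per-window counts rather than raw frames keeps the gateway payload small, which suits the time-series storage stage described above.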

People also ask

How do you build a real-time traffic management system that combines edge AI inference with cloud analytics?

This Smart Traffic Analysis System uses YOLOv8 on edge devices for low-latency vehicle detection, Arduino Nano microcontrollers for signal decisions, and cloud layers for centralized ML training and monitoring. Multi-sensor fusion (cameras, IR, ultrasonic) feeds both edge inference and cloud stream processing, enabling scalable, responsive traffic optimization.
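One way the multi-sensor fusion and signal decision could work is sketched below. It is written in Python purely to show the logic; firmware on an Arduino Nano would be written differently. The fusion rule, the `ApproachReading` fields, the 200 cm presence threshold, and the green-time constants are hypothetical values chosen for illustration, not figures from the diagram.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApproachReading:
    """Sensor snapshot for one approach to the intersection (assumed fields)."""
    camera_count: int     # vehicles counted by the camera model
    ir_triggered: bool    # True when the IR sensor at the stop line detects an object
    ultrasonic_cm: float  # distance to the nearest object, in centimeters


def estimate_demand(reading, presence_cm=200.0):
    """Fuse the three sensors into a vehicle estimate.

    If the camera reports nothing but IR or ultrasonic indicates presence,
    assume at least one vehicle is waiting.
    """
    presence = reading.ir_triggered or reading.ultrasonic_cm < presence_cm
    if reading.camera_count == 0 and presence:
        return 1
    return reading.camera_count


def green_seconds(demand, per_vehicle=2.0, min_green=10.0, max_green=60.0):
    """Scale green time with demand, clamped to a safe minimum and maximum."""
    return max(min_green, min(max_green, demand * per_vehicle))


if __name__ == "__main__":
    reading = ApproachReading(camera_count=12, ir_triggered=True, ultrasonic_cm=150.0)
    print(green_seconds(estimate_demand(reading)))
```

Keeping this decision local to the intersection means the signal can still respond when the cloud link is slow or unavailable, while the cloud layer handles training and monitoring.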

- Domain: ML Pipeline

- Audience: IoT and edge computing architects designing real-time traffic management systems

Generated by Diagrams.so — AI architecture diagram generator with native Draw.io output. Fork this diagram, remix it, or download as .drawio, PNG, or SVG.