Single Neuron Model - Neural Network

About This Architecture

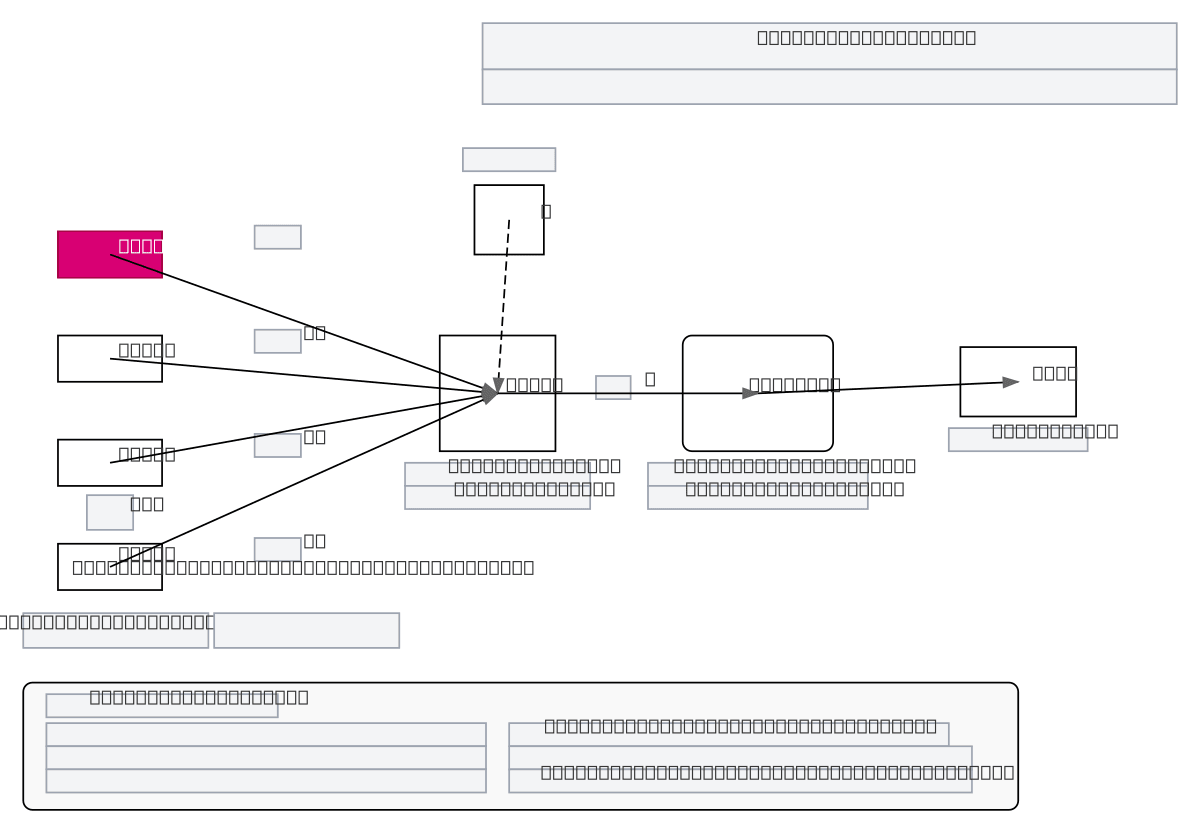

Single neuron model diagram illustrating the fundamental building block of neural networks: an input vector X(t), a weight matrix Wx, a bias term b, and a sigmoid activation function. Inputs x1(t) through xn(t) flow into a summation node that computes z = Wx·X(t) + b. The result then passes through the activation function σ(z) to produce the output y(t). The diagram shows the three core operations of a neuron (weighted sum, bias addition, and nonlinear activation), which make up the forward computation that training adjusts by updating Wx and b. Fork this diagram to customize the activation function, add batch normalization, or extend it to a multi-layer network for your documentation or educational materials.
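Written out component by component, the computation in the diagram looks like the block below. This assumes Wx acts as a row of n weights w_1 … w_n, because there is a single output neuron (the diagram itself labels Wx a matrix). It also assumes σ is the logistic sigmoid named in the diagram.

```latex
z(t) = W_x X(t) + b = \sum_{i=1}^{n} w_i \, x_i(t) + b,
\qquad
y(t) = \sigma\big(z(t)\big) = \frac{1}{1 + e^{-z(t)}}
```

Because σ(z) always lies strictly between 0 and 1, y(t) can be read as a soft, probability-like activation level.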

People also ask

How does a single neuron in a neural network compute its output from inputs, weights, and bias?

A single neuron takes an input vector X(t), multiplies it by the weight matrix Wx, and adds the bias term b to compute the pre-activation z = Wx·X(t) + b. It then applies an activation function σ(z), such as the sigmoid, to produce the output y(t). The diagram shows the complete forward pass: inputs flow into a summation node, the bias is added, and the result passes through a nonlinear activation function that enables the neuron to capture nonlinear relationships.
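The forward pass described above can be sketched in plain Python. This minimal sketch makes two assumptions. First, Wx is a length-n weight vector for this one neuron. Second, the same weights and bias are reused at every step t (the diagram does not say how X(t) is indexed over time). The function names `sigmoid`, `neuron_forward`, and `neuron_over_time` are hypothetical and illustrative, not part of any library.

```python
import math
from typing import List, Sequence


def sigmoid(z: float) -> float:
    """Logistic sigmoid 1 / (1 + e^(-z)), written to avoid overflow."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    # For negative z, use the equivalent form e^z / (1 + e^z) so exp never overflows.
    e = math.exp(z)
    return e / (1.0 + e)


def neuron_forward(x: Sequence[float], w: Sequence[float], b: float) -> float:
    """One neuron: pre-activation z = w . x + b, then output y = sigmoid(z)."""
    if len(x) != len(w):
        raise ValueError("input and weight vectors must have the same length")
    z = sum(wi * xi for wi, xi in zip(w, x)) + b  # weighted sum plus bias
    return sigmoid(z)  # nonlinear activation


def neuron_over_time(
    xs: Sequence[Sequence[float]], w: Sequence[float], b: float
) -> List[float]:
    """Apply the same neuron (shared w and b, an assumption) to each input X(t)."""
    return [neuron_forward(x_t, w, b) for x_t in xs]


if __name__ == "__main__":
    # Hypothetical weights and inputs for two time steps.
    ys = neuron_over_time([[2.0, 4.0], [1.0, 0.0]], w=[0.5, -0.25], b=0.0)
    print(ys)  # first value is 0.5, since z = 0.5*2 - 0.25*4 + 0 = 0
```

Splitting `sigmoid` out of `neuron_forward` mirrors the diagram's separate summation and activation nodes, so a different activation function can be swapped in without touching the weighted sum.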

- Domain: ML Pipeline

- Audience: Machine learning engineers and deep learning practitioners building neural network models

Generated by Diagrams.so, an AI architecture diagram generator with native Draw.io output. Fork this diagram, remix it, or download it as .drawio, PNG, or SVG.