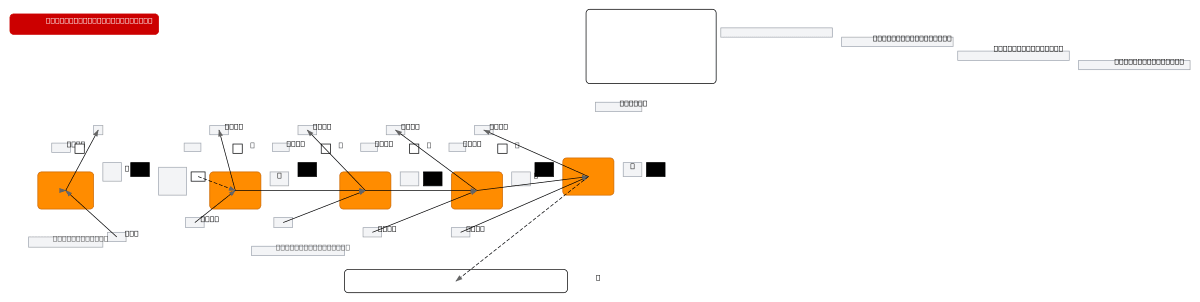

Simple Recurrent Neuron - Architecture Diagram

About This Architecture

This simple recurrent neuron architecture demonstrates temporal dependency modeling through weight matrices and hidden state feedback. The input sequence X(t) flows through the same neuron cell at every time step, with shared input weights W_x and a recurrent weight w_y, and each step's output y(t) feeds into the next time step's computation. The compact-to-unrolled visualization clarifies how RNNs maintain memory across time steps via the recurrence relation y(t) = σ(W_x^T·X(t) + w_y·y(t-1) + b), where σ is the activation function and b is the bias. Understanding this foundational pattern is essential for building sequence models, time-series forecasting, and natural language processing systems. Fork this diagram on Diagrams.so to customize neuron counts, activation functions, or extend it with LSTM/GRU variants for your documentation or research.
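As a rough illustration of the recurrence relation y(t) = σ(W_x^T·X(t) + w_y·y(t-1) + b), the sketch below computes one neuron's outputs over a short sequence in plain Python. It is not taken from the diagram: the function names `recurrent_neuron_step` and `unroll`, the logistic sigmoid as σ, the zero initial output, and all numeric values are assumptions chosen for illustration.

```python
import math
from typing import Callable, List, Sequence


def sigmoid(z: float) -> float:
    """Logistic function; assumed here as one common choice for σ."""
    return 1.0 / (1.0 + math.exp(-z))


def recurrent_neuron_step(
    x_t: Sequence[float],
    y_prev: float,
    w_x: Sequence[float],
    w_y: float,
    b: float,
    activation: Callable[[float], float] = sigmoid,
) -> float:
    """Compute y(t) = σ(W_x^T·X(t) + w_y·y(t-1) + b) for a single neuron."""
    weighted_input = sum(w * x for w, x in zip(w_x, x_t))  # W_x^T·X(t)
    return activation(weighted_input + w_y * y_prev + b)


def unroll(
    xs: Sequence[Sequence[float]],
    w_x: Sequence[float],
    w_y: float,
    b: float,
    y0: float = 0.0,  # assumed initial output before the first time step
    activation: Callable[[float], float] = sigmoid,
) -> List[float]:
    """Apply the same cell, with the same weights, at every time step."""
    ys: List[float] = []
    y_prev = y0
    for x_t in xs:
        y_prev = recurrent_neuron_step(x_t, y_prev, w_x, w_y, b, activation)
        ys.append(y_prev)
    return ys


# Hypothetical three-step sequence of two-dimensional inputs.
xs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs = unroll(xs, w_x=[0.5, -0.5], w_y=0.8, b=0.0)
print(outputs)
```

The loop in `unroll` corresponds to the unrolled view: the weights never change between steps, and only `y_prev` carries information forward in time.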

People also ask

How does a simple recurrent neural network maintain memory across time steps?

A simple RNN uses a recurrent weight w_y to feed the previous timestep's output y(t-1) back into the current neuron computation, where it is combined with the weighted input W_x^T·X(t) and the bias b. This diagram shows both the compact form and the unrolled-in-time view, illustrating how the same neuron cell processes sequential inputs X(t) through X(t4) while maintaining temporal dependencies via the recurrence relation y(t) = σ(W_x^T·X(t) + w_y·y(t-1) + b).
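One way to see the memory effect described in this answer is to feed the neuron a single impulse and compare the outputs with and without the recurrent weight. The following is a minimal sketch under simplifying assumptions that do not come from the diagram: a scalar input, tanh as the activation, a zero bias, a zero initial output, and a hypothetical helper named `run`.

```python
import math
from typing import List, Sequence


def run(xs: Sequence[float], w_x: float, w_y: float, b: float = 0.0) -> List[float]:
    """Unroll y(t) = tanh(w_x·x(t) + w_y·y(t-1) + b) over a scalar sequence."""
    y = 0.0  # assumed output before the first time step
    ys: List[float] = []
    for x in xs:
        y = math.tanh(w_x * x + w_y * y + b)
        ys.append(y)
    return ys


# A single impulse at the first step, followed by silence.
impulse = [1.0, 0.0, 0.0, 0.0, 0.0]

with_memory = run(impulse, w_x=1.0, w_y=0.9)     # feedback enabled
without_memory = run(impulse, w_x=1.0, w_y=0.0)  # feedback disabled

print("w_y = 0.9:", [round(y, 3) for y in with_memory])
print("w_y = 0.0:", [round(y, 3) for y in without_memory])
```

With the recurrent weight set to zero, the output falls back to zero as soon as the input does. With a nonzero w_y, the first input keeps influencing later outputs, fading gradually over the following steps.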

- Domain: ML Pipeline

- Audience: Machine learning engineers and deep learning practitioners implementing recurrent neural networks

Generated by Diagrams.so — AI architecture diagram generator with native Draw.io output. Fork this diagram, remix it, or download as .drawio, PNG, or SVG.