SageMaker Canvas Iris ML Pipeline on AWS

About This Architecture

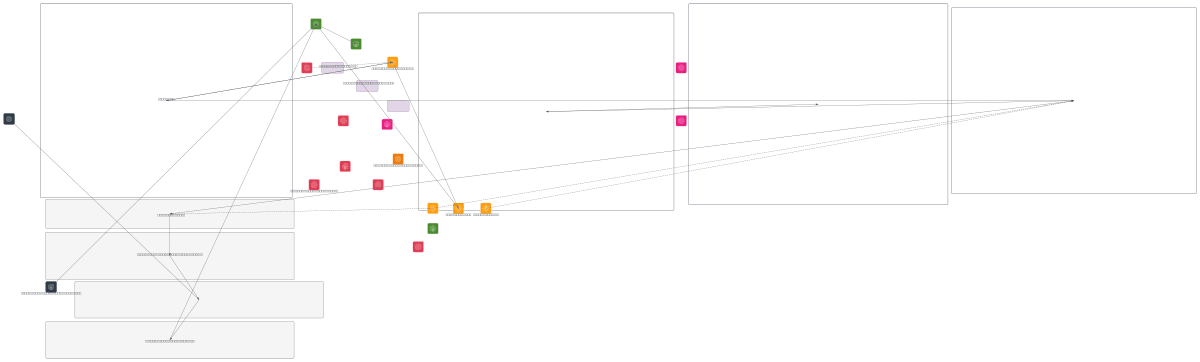

SageMaker Canvas Iris ML Pipeline demonstrates an end-to-end low-code machine learning workflow on AWS, from data ingestion through real-time inference. The pipeline ingests the Iris dataset into S3, catalogs it via AWS Glue and Lake Formation, then uses SageMaker Canvas for automated data preparation and built-in model training without writing code. Trained models are registered and deployed to SageMaker Real-Time Endpoints, with predictions served through API Gateway and Lambda, while CloudWatch monitors both training metrics and endpoint performance. This architecture eliminates the need for custom ML code, enabling rapid model development and deployment for classification tasks. Fork this diagram on Diagrams.so to customize data sources, add batch inference, or integrate additional AWS services like SageMaker Feature Store. The modular design separates ingestion, training, and inference layers, making it easy to scale or swap components as requirements evolve.

People also ask

How do I build an end-to-end machine learning pipeline using SageMaker Canvas on AWS without writing code?

This diagram shows a complete SageMaker Canvas pipeline: ingest Iris data to S3, catalog it with Glue and Lake Formation, use Canvas for auto data prep and built-in model training, register the model, deploy to a real-time endpoint, and serve predictions via API Gateway and Lambda. CloudWatch monitors both training and inference performance throughout.

- Domain:

- Ml Pipeline

- Audience:

- ML engineers and data scientists building low-code ML pipelines with SageMaker Canvas

Generated by Diagrams.so — AI architecture diagram generator with native Draw.io output. Fork this diagram, remix it, or download as .drawio, PNG, or SVG.