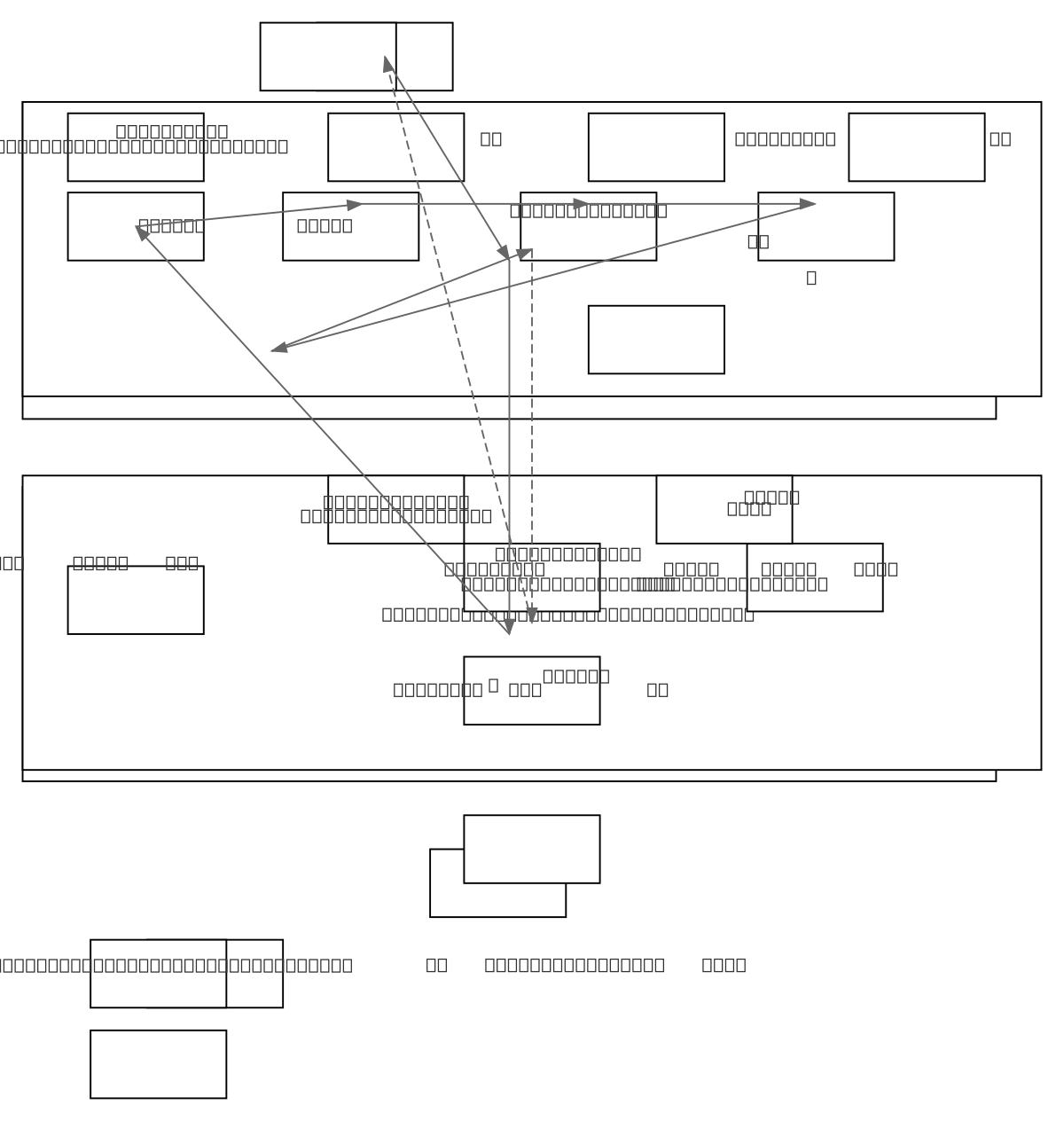

RBF Kernel Regression Algorithm Steps

About This Architecture

RBF kernel regression is a non-parametric supervised learning algorithm that uses radial basis function (RBF) kernels to fit non-linear regression functions. The workflow begins with training data, computes a Gram matrix of pairwise RBF kernel similarities, adds ridge regularization, and solves a linear system to obtain dual coefficients that weight each training point. A prediction is a weighted sum of kernel similarities between the query point and all training points. The hyperparameters sigma (kernel bandwidth) and lambda (regularization strength) are tuned via cross-validation. The representer theorem from reproducing kernel Hilbert space (RKHS) theory guarantees that the regularized solution has this finite kernel-expansion form, so the model can fit flexible non-linear functions while the ridge term controls overfitting. Fork this diagram on Diagrams.so to customize kernel parameters, add alternative kernels, or integrate it into your ML pipeline documentation.
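The workflow described above can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: the function names `rbf_kernel`, `fit`, and `predict`, the toy sine data, and the kernel parameterization exp(-||a - b||^2 / (2 * sigma^2)) are assumptions made for the example, since the diagram description does not fix a particular convention for sigma.

```python
import numpy as np


def rbf_kernel(A, B, sigma):
    """Pairwise RBF similarities exp(-||a - b||^2 / (2 * sigma^2)).

    The 2 * sigma^2 scaling is an assumed convention for the bandwidth.
    """
    # Squared Euclidean distances via ||a||^2 + ||b||^2 - 2 a.b
    sq_dists = (
        np.sum(A**2, axis=1)[:, None]
        + np.sum(B**2, axis=1)[None, :]
        - 2.0 * A @ B.T
    )
    # Clip tiny negative values caused by floating-point round-off
    return np.exp(-np.maximum(sq_dists, 0.0) / (2.0 * sigma**2))


def fit(X_train, y_train, sigma, lam):
    """Solve (K + lam * I) alpha = y for the dual coefficients alpha."""
    K = rbf_kernel(X_train, X_train, sigma)  # Gram matrix, shape (n, n)
    n = K.shape[0]
    return np.linalg.solve(K + lam * np.eye(n), y_train)


def predict(X_query, X_train, alpha, sigma):
    """Weighted sum of kernel similarities to every training point."""
    return rbf_kernel(X_query, X_train, sigma) @ alpha


# Toy 1-D data (hypothetical): noisy samples of sin(x)
rng = np.random.default_rng(0)
X_train = rng.uniform(-3.0, 3.0, size=(40, 1))
y_train = np.sin(X_train[:, 0]) + 0.1 * rng.standard_normal(40)

alpha = fit(X_train, y_train, sigma=1.0, lam=0.1)
X_query = np.linspace(-2.5, 2.5, 5).reshape(-1, 1)
y_pred = predict(X_query, X_train, alpha, sigma=1.0)
```

The sketch uses `np.linalg.solve` rather than forming an explicit inverse, which is generally the more stable choice. Because the Gram matrix is n-by-n, memory grows quadratically and the direct solve grows cubically with the number of training points.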

People also ask

What are the steps in RBF kernel regression and how do you compute predictions using kernel methods?

RBF kernel regression computes a Gram matrix K of pairwise RBF kernel similarities between training points, adds ridge regularization (lambda*I), solves the linear system (K + lambda*I)*alpha = y for the dual coefficients alpha, and predicts at new points as weighted sums of kernel similarities. Hyperparameters sigma (bandwidth) and lambda (regularization) are tuned via cross-validation to balance model flexibility and generalization.
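One way the cross-validation step might look is a plain grid search with k-fold splits. The sketch below is self-contained and makes several assumptions that are not part of the diagram: the helper names `rbf_kernel` and `cv_mse`, the candidate grids for sigma and lambda, the 5-fold split, mean squared error as the score, and the toy sine data.

```python
import numpy as np


def rbf_kernel(A, B, sigma):
    """RBF similarities exp(-||a - b||^2 / (2 * sigma^2)) for all row pairs."""
    diff = A[:, None, :] - B[None, :, :]
    return np.exp(-np.sum(diff**2, axis=2) / (2.0 * sigma**2))


def cv_mse(X, y, sigma, lam, n_folds=5, seed=0):
    """Mean squared validation error averaged over n_folds splits."""
    idx = np.random.default_rng(seed).permutation(len(y))
    folds = np.array_split(idx, n_folds)
    errors = []
    for k in range(n_folds):
        val = folds[k]
        train = np.concatenate([folds[j] for j in range(n_folds) if j != k])
        # Fit on the training folds: solve (K + lam * I) alpha = y
        K = rbf_kernel(X[train], X[train], sigma)
        alpha = np.linalg.solve(K + lam * np.eye(len(train)), y[train])
        # Score on the held-out fold
        pred = rbf_kernel(X[val], X[train], sigma) @ alpha
        errors.append(np.mean((pred - y[val]) ** 2))
    return float(np.mean(errors))


# Toy 1-D data (hypothetical): noisy samples of sin(x)
rng = np.random.default_rng(1)
X = rng.uniform(-3.0, 3.0, size=(60, 1))
y = np.sin(X[:, 0]) + 0.1 * rng.standard_normal(60)

# Candidate grids are illustrative; real ranges depend on the data scale
sigmas = [0.1, 0.5, 1.0, 2.0]
lambdas = [1e-3, 1e-2, 1e-1, 1.0]
scores = {(s, lam): cv_mse(X, y, s, lam) for s in sigmas for lam in lambdas}
best_sigma, best_lambda = min(scores, key=scores.get)
```

A small sigma or lambda lets the model follow the training points closely, while large values smooth the fit, so the pair with the lowest validation error is a reasonable compromise between flexibility and generalization. Reusing the same fold assignment for every grid point (fixed `seed`) keeps the scores comparable.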

- Domain: ML Pipeline

- Audience: Machine learning engineers implementing kernel methods and non-linear regression models

Generated by Diagrams.so — AI architecture diagram generator with native Draw.io output. Fork this diagram, remix it, or download as .drawio, PNG, or SVG.