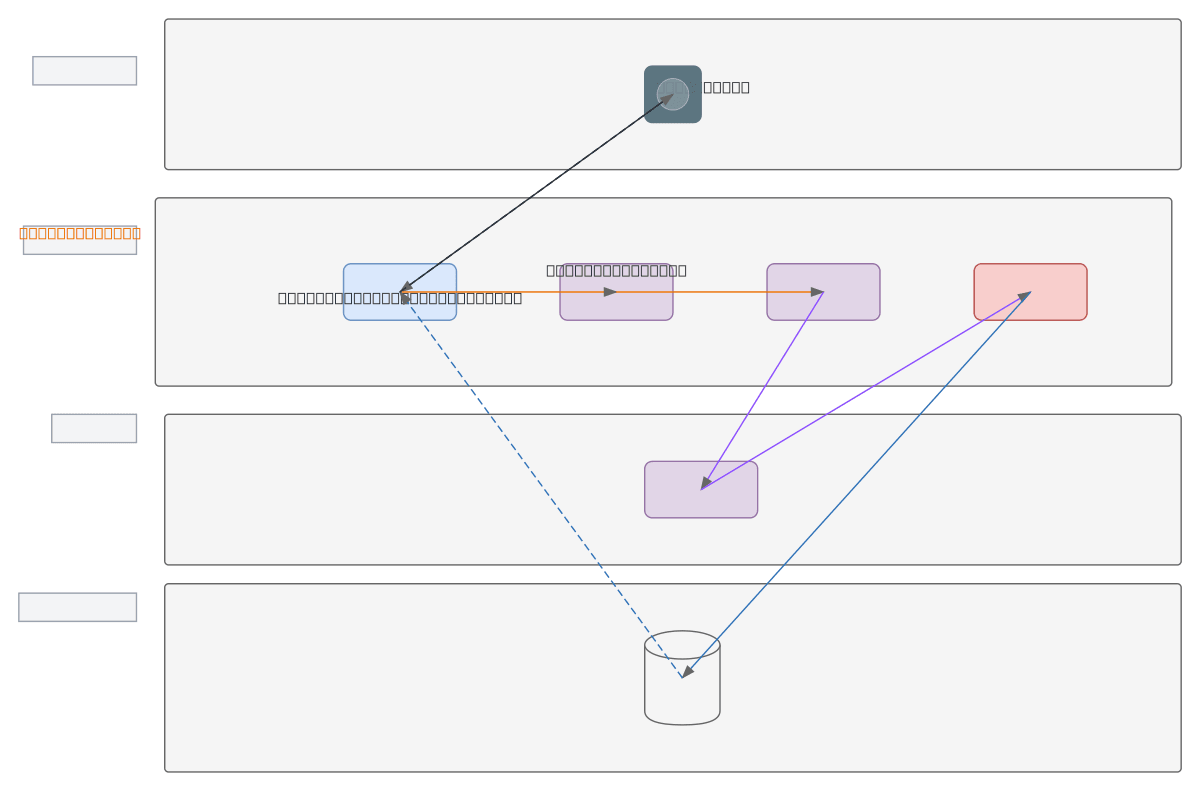

NL-to-SQL AI Query Conversion System

About This Architecture

A natural language-to-SQL conversion system that uses a local LLM pipeline to turn user questions into executable database queries. Natural language input from the user interface goes to a backend server, which orchestrates schema analysis, prompt generation, and SQL validation before executing the query against MySQL or PostgreSQL. Because inference runs locally through Ollama, this offline-first design keeps queries and schema details on your own infrastructure, removes cloud API dependencies and the network round trip to a hosted provider (end-to-end latency still depends on local hardware and model size), and gives you full control over the LLM inference process. Fork this diagram on Diagrams.so to customize the schema analyzer, adjust the prompt templates, or integrate alternative LLM providers. Because the pipeline is modular, teams can swap components, such as replacing Ollama with another local model runtime or adding extra validation layers, without restructuring the core architecture.
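To make the schema-analysis and prompt-generation stages concrete, here is a minimal sketch that reads table and column definitions and folds them into a prompt template. It rests on several assumptions: it uses Python's built-in `sqlite3` module as a stand-in so it runs without a database server (against MySQL or PostgreSQL you would typically read `information_schema.columns` instead), and `describe_schema`, `build_prompt`, and the template wording are hypothetical names chosen for illustration, not part of the diagram.

```python
import sqlite3

# Hypothetical prompt template; the wording is an assumption, not taken from the diagram.
PROMPT_TEMPLATE = """You are a SQL assistant. Using only the tables below, write one
{dialect} SELECT statement that answers the question. Return only the SQL.

Schema:
{schema}

Question: {question}
SQL:"""


def describe_schema(conn: sqlite3.Connection) -> str:
    """Summarize every user table as 'table(col TYPE, ...)', one table per line.

    SQLite stand-in: on MySQL or PostgreSQL, query information_schema.columns instead.
    """
    lines = []
    tables = conn.execute(
        "SELECT name FROM sqlite_master "
        "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name"
    ).fetchall()
    for (table,) in tables:
        # PRAGMA table_info returns rows of (cid, name, type, notnull, dflt_value, pk).
        cols = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
        col_desc = ", ".join(f"{col[1]} {col[2]}" for col in cols)
        lines.append(f"{table}({col_desc})")
    return "\n".join(lines)


def build_prompt(schema: str, question: str, dialect: str = "PostgreSQL") -> str:
    """Fill the template with the schema summary and the user's question."""
    return PROMPT_TEMPLATE.format(dialect=dialect, schema=schema, question=question)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, country TEXT)")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)")
    print(build_prompt(describe_schema(conn), "What is the total order value per country?"))
```

The whole schema summary is sent to the model on every request, so a compact one-line-per-table format helps; a large schema may need to be filtered down to the relevant tables before prompting.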

People also ask

How do you build a natural language-to-SQL system using a local LLM like Ollama without cloud API dependencies?

This diagram shows a complete pipeline: natural language input from the user goes to a backend server, which analyzes the database schema, builds a prompt from the question and schema, sends it to a local Ollama LLM for inference, validates the resulting SQL, and executes it against MySQL or PostgreSQL. Because neither the query nor the schema leaves your infrastructure, this offline-first approach protects data privacy and avoids round trips to a cloud API.
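The inference, validation, and execution steps can be sketched in Python as follows. This is a minimal sketch, not the diagram's implementation. It assumes Ollama's default REST endpoint (`http://localhost:11434/api/generate`) and a locally pulled model named `llama3` (substitute whichever model you run). It also uses `sqlite3` as a stand-in for MySQL or PostgreSQL; on those engines you would run `EXPLAIN` on the target server and connect with a read-only account. The names `ask_ollama`, `clean_sql`, `validate_sql`, and `answer` are hypothetical.

```python
import json
import re
import sqlite3
import urllib.request
from typing import Callable

# Ollama's default local REST endpoint; the model name used below is an assumption.
OLLAMA_URL = "http://localhost:11434/api/generate"
FENCE = "`" * 3  # Markdown code fence that models often wrap around SQL
READ_ONLY = re.compile(r"^\s*(SELECT|WITH)\b", re.IGNORECASE)


def ask_ollama(prompt: str, model: str = "llama3") -> str:
    """Send one non-streaming generate request to a local Ollama server."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    req = urllib.request.Request(
        OLLAMA_URL, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=120) as resp:
        return json.loads(resp.read())["response"]


def clean_sql(text: str) -> str:
    """Strip a surrounding Markdown fence (with its language tag) and a trailing ';'."""
    text = text.strip()
    if text.startswith(FENCE):
        text = text[len(FENCE):]
        text = text.split("\n", 1)[1] if "\n" in text else ""
        text = text.rsplit(FENCE, 1)[0]
    return text.strip().rstrip(";").strip()


def validate_sql(sql: str, conn: sqlite3.Connection) -> str:
    """Reject anything but one SELECT/WITH statement that compiles on this schema.

    This is only a first filter (SQLite, for example, allows WITH ... DELETE);
    run queries under a read-only database account for a real guarantee.
    """
    if ";" in sql:  # conservative: also rejects ';' inside string literals
        raise ValueError("multiple statements are not allowed")
    if not READ_ONLY.match(sql):
        raise ValueError("only SELECT/WITH queries are allowed")
    try:
        conn.execute(f"EXPLAIN QUERY PLAN {sql}")  # compiles the query without running it
    except sqlite3.Error as exc:
        raise ValueError(f"SQL does not compile: {exc}") from exc
    return sql


def answer(prompt: str, conn: sqlite3.Connection,
           llm: Callable[[str], str] = ask_ollama, max_rows: int = 100) -> list:
    """Prompt -> LLM -> clean -> validate -> execute, returning at most max_rows rows."""
    sql = validate_sql(clean_sql(llm(prompt)), conn)
    return conn.execute(sql).fetchmany(max_rows)
```

Passing the model call in as the `llm` parameter keeps the network request at the edge. The validation and execution path can then be tested with a stubbed model, and swapping Ollama for another local runtime only means supplying a different callable.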

- Domain: Software Architecture
- Audience: Full-stack engineers building AI-powered database query systems

Generated by Diagrams.so — AI architecture diagram generator with native Draw.io output. Fork this diagram, remix it, or download as .drawio, PNG, or SVG.