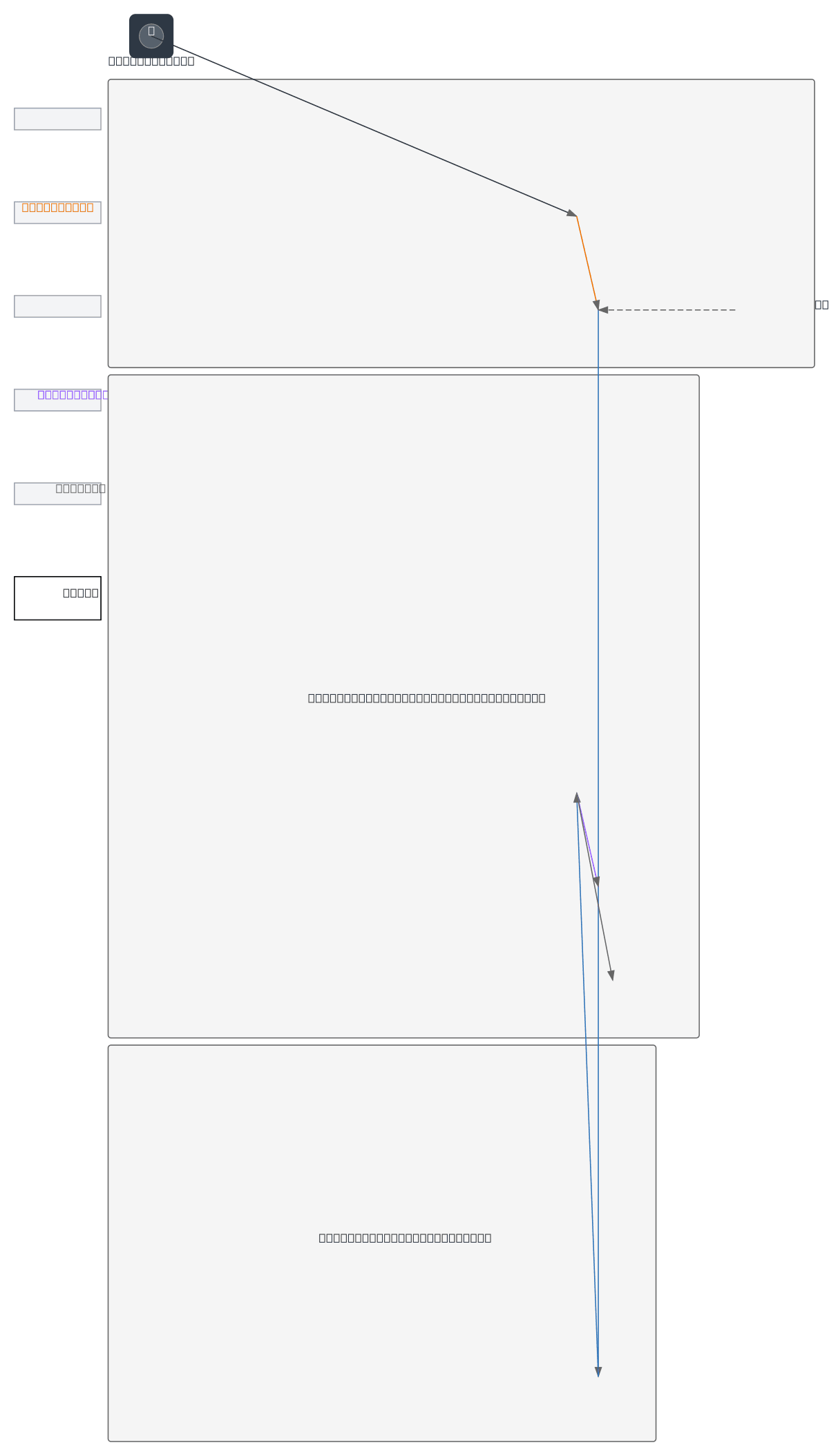

Hand Gesture Slide Controller Architecture

About This Architecture

This hand gesture slide controller architecture uses an MPU6050 motion sensor (a 3-axis accelerometer combined with a 3-axis gyroscope) to detect hand motion and an ESP32 microcontroller to transmit commands wirelessly. Sensor data flows from the hand gesture through the MPU6050 to the ESP32, which processes the signals and broadcasts the resulting commands over Wi-Fi to a laptop browser for real-time slide navigation. The architecture demonstrates a practical IoT pattern for contactless presentation control: it removes the need for a physical remote while aiming for low latency and reliable delivery. Fork this diagram on Diagrams.so to customize sensor calibration, add gesture recognition logic, or integrate alternative wireless protocols such as Bluetooth or Zigbee.
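
The processing step on the ESP32 is not specified in the diagram. As a minimal sketch of one possible approach, the Python below turns a stream of x-axis acceleration samples into "next" and "prev" commands using a simple threshold plus a cooldown window. The function name `detect_swipes`, the threshold, the cooldown, and the command strings are all illustrative assumptions, not part of the original architecture.

```python
from typing import Iterable, Iterator, Tuple

# Hypothetical tuning values; real ones depend on sensor mounting and calibration.
SWIPE_THRESHOLD_G = 0.6  # minimum |x| acceleration (in g) treated as a swipe
COOLDOWN_S = 0.8         # ignore further motion for this long after a swipe


def detect_swipes(
    samples: Iterable[Tuple[float, float]],
) -> Iterator[Tuple[float, str]]:
    """Yield (timestamp_s, command) for each swipe found in the samples.

    Each sample is (timestamp_s, accel_x_g). Positive x is assumed to mean
    a swipe toward the next slide; that axis convention is an assumption.
    """
    last_fired = float("-inf")
    for t, ax in samples:
        if t - last_fired < COOLDOWN_S:
            continue  # still in cooldown, so the return stroke cannot fire again
        if ax >= SWIPE_THRESHOLD_G:
            last_fired = t
            yield t, "next"
        elif ax <= -SWIPE_THRESHOLD_G:
            last_fired = t
            yield t, "prev"


if __name__ == "__main__":
    stream = [(0.0, 0.05), (0.1, 0.9), (0.2, -0.8), (1.2, -0.7), (1.3, 0.1)]
    print(list(detect_swipes(stream)))  # [(0.1, 'next'), (1.2, 'prev')]
```

The cooldown is what keeps the hand's return motion at 0.2 s from being read as a second gesture. A real build would likely tune both constants against recorded sensor data.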

People also ask

How do I build a hand gesture-controlled presentation system using an MPU6050 sensor and ESP32?

This diagram shows a complete IoT architecture in which an MPU6050 accelerometer detects hand gestures and sends the motion data to an ESP32 microcontroller for processing. The ESP32 then transmits gesture commands over Wi-Fi to a laptop browser, enabling real-time slide navigation without a physical remote.
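
For the sensor-to-ESP32 step, the sketch below shows how a raw MPU6050 accelerometer reading could be decoded into g units. The register address, byte layout, and scale factor follow the MPU6050 register map at its default ±2 g range; the helper name `decode_accel` is hypothetical, and how the six bytes are obtained (for example, an I2C read on the ESP32) is left as an assumption outside the function.

```python
import struct
from typing import Tuple

# From the MPU6050 register map: accelerometer output starts at ACCEL_XOUT_H
# (0x3B) and spans six bytes, one big-endian signed 16-bit value per axis.
ACCEL_XOUT_H = 0x3B
# Sensitivity at the default +/-2 g full-scale range.
LSB_PER_G = 16384.0


def decode_accel(raw: bytes) -> Tuple[float, float, float]:
    """Convert six raw accelerometer bytes into (x, y, z) in units of g."""
    if len(raw) != 6:
        raise ValueError("expected 6 bytes starting at ACCEL_XOUT_H")
    x, y, z = struct.unpack(">hhh", raw)
    return x / LSB_PER_G, y / LSB_PER_G, z / LSB_PER_G


if __name__ == "__main__":
    # 0x4000 is +16384 (1 g on x); 0xC000 is -16384 (-1 g on z).
    sample = bytes([0x40, 0x00, 0x00, 0x00, 0xC0, 0x00])
    print(decode_accel(sample))  # (1.0, 0.0, -1.0)
```

If the sensor is configured for a different full-scale range, `LSB_PER_G` has to change to match.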

- Domain: IoT

- Audience: IoT engineers and embedded systems developers building gesture-controlled applications

Generated by Diagrams.so — AI architecture diagram generator with native Draw.io output. Fork this diagram, remix it, or download as .drawio, PNG, or SVG.