Hand Gesture Slide and Mouse Controller - ESP32

About This Architecture

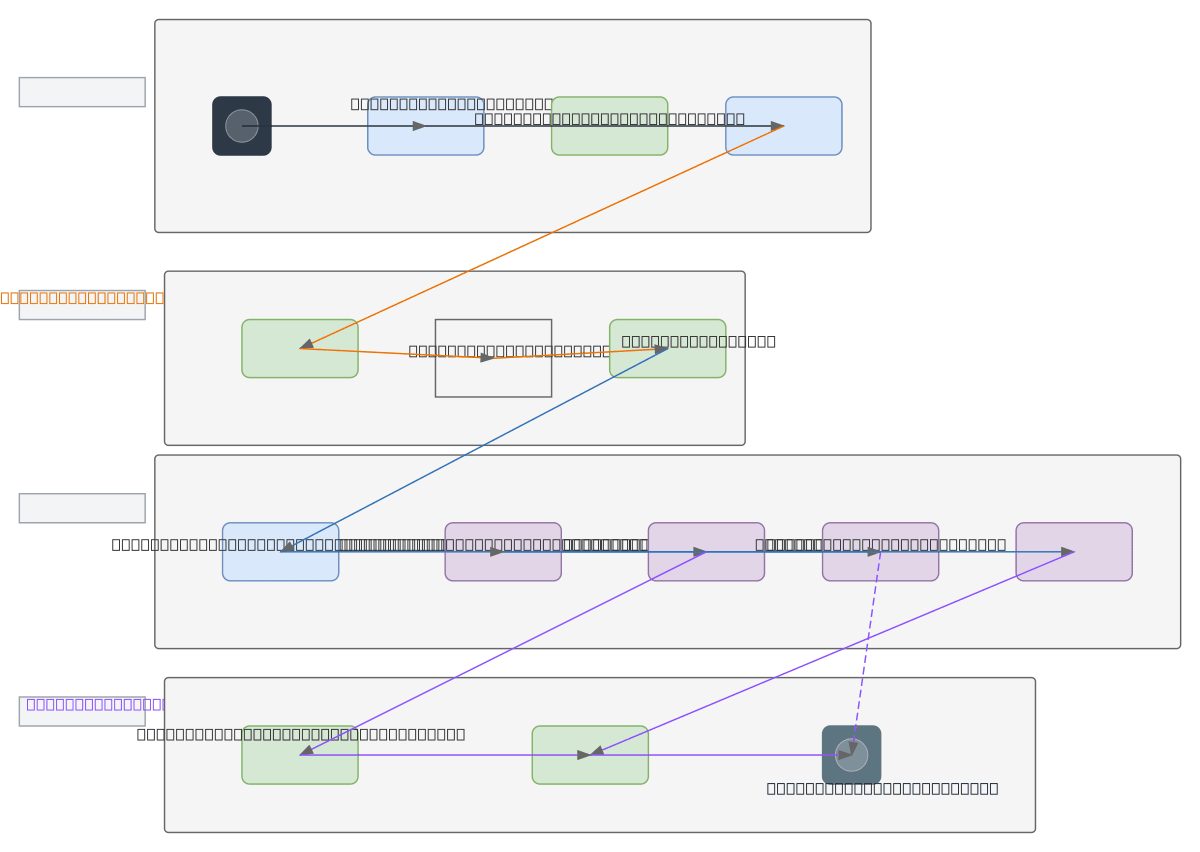

This architecture implements a hand gesture slide and mouse controller built on an ESP32 microcontroller and an MPU6050 inertial measurement sensor mounted on a wrist-worn glove. Sensor data streams over WiFi to a local WebSocket server running Node.js or Python Flask, where a gesture parser interprets motion commands and routes them to slide control and mouse pointer logic. The architecture bridges gesture input to PowerPoint through COM/API integration and, at the same time, to a web UI display, enabling wireless presentation control from hand movements alone. Fork this diagram on Diagrams.so to customize sensor types, add alternative gesture recognition models, or adapt the WebSocket protocol for your embedded platform. The pattern demonstrates edge computing principles by processing raw IMU data locally before transmission, which reduces latency and network bandwidth for real-time interaction.
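As a rough illustration of the gesture parser stage, the sketch below decodes one IMU message and maps a fast wrist rotation to a slide command. The JSON field names (`t`, `gx`, `gy`, `gz`), the choice of the z axis, the 150 deg/s threshold, and the 0.5 s cooldown are all assumptions made for this example; the diagram does not specify a message format or a recognition method.

```python
import json
from dataclasses import dataclass
from typing import Optional

# Assumed tuning values; real ones depend on sensor mounting and the user.
SWIPE_THRESHOLD_DPS = 150.0   # gyro rate (deg/s) that counts as a swipe
COOLDOWN_S = 0.5              # ignore further swipes for this long


@dataclass
class ImuSample:
    t: float    # timestamp in seconds (hypothetical field)
    gx: float   # gyro rates in deg/s (hypothetical fields)
    gy: float
    gz: float


def decode_sample(message: str) -> ImuSample:
    """Decode one JSON text frame as it might arrive over the WebSocket."""
    data = json.loads(message)
    return ImuSample(
        t=float(data["t"]),
        gx=float(data["gx"]),
        gy=float(data["gy"]),
        gz=float(data["gz"]),
    )


class GestureParser:
    """Threshold-based swipe detector with a cooldown to avoid repeats."""

    def __init__(self, threshold: float = SWIPE_THRESHOLD_DPS,
                 cooldown: float = COOLDOWN_S) -> None:
        self.threshold = threshold
        self.cooldown = cooldown
        self._last_fire: Optional[float] = None

    def feed(self, sample: ImuSample) -> Optional[str]:
        # Suppress detections that fall inside the cooldown window.
        if (self._last_fire is not None
                and sample.t - self._last_fire < self.cooldown):
            return None
        if sample.gz > self.threshold:
            gesture = "next_slide"
        elif sample.gz < -self.threshold:
            gesture = "prev_slide"
        else:
            return None
        self._last_fire = sample.t
        return gesture
```

A simple threshold is only one option; the same `feed` interface could wrap a trained gesture recognition model instead.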

People also ask

How do I build a wireless hand gesture controller for PowerPoint presentations using ESP32 and motion sensors?

This diagram shows a complete IoT pipeline: a wrist-mounted glove with an MPU6050 sensor connects to an ESP32 microcontroller, which transmits gesture data over WiFi to a local WebSocket server. The server runs a gesture parser that interprets motion commands and routes them to a PowerPoint COM/API bridge and to mouse controller logic, enabling gesture-based slide navigation and pointer control.
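The routing step described above could be sketched as a small dispatcher that hands each parsed command to either a slide handler or a mouse handler. Everything here is hypothetical: the command dictionaries, the `type` key, the class names, and the tilt-to-pixel gain are illustration only. The handlers just record what they would do; a real deployment would replace them with calls into the PowerPoint bridge and the operating system's pointer API.

```python
from typing import Callable, Dict, List, Tuple


class CommandRouter:
    """Dispatch parsed commands to a handler registered for their type."""

    def __init__(self) -> None:
        self._handlers: Dict[str, Callable[[dict], None]] = {}

    def register(self, kind: str, handler: Callable[[dict], None]) -> None:
        self._handlers[kind] = handler

    def dispatch(self, command: dict) -> bool:
        """Return True if a handler accepted the command, else False."""
        handler = self._handlers.get(command.get("type", ""))
        if handler is None:
            return False
        handler(command)
        return True


class RecordingSlideController:
    """Stand-in for the PowerPoint bridge; only records requested actions."""

    def __init__(self) -> None:
        self.actions: List[str] = []

    def handle(self, command: dict) -> None:
        self.actions.append(command["action"])


class RecordingMouseController:
    """Stand-in for pointer control; converts tilt angles to pixel deltas."""

    def __init__(self, gain: float = 10.0) -> None:
        self.gain = gain  # assumed pixels per degree of tilt
        self.moves: List[Tuple[int, int]] = []

    def handle(self, command: dict) -> None:
        dx = round(command["roll_deg"] * self.gain)
        dy = round(command["pitch_deg"] * self.gain)
        self.moves.append((dx, dy))


router = CommandRouter()
slides = RecordingSlideController()
mouse = RecordingMouseController()
router.register("slide", slides.handle)
router.register("mouse", mouse.handle)

router.dispatch({"type": "slide", "action": "next"})
router.dispatch({"type": "mouse", "roll_deg": 1.5, "pitch_deg": -2.0})
```

Keeping the handlers behind a registry means the slide and mouse paths can be swapped or tested independently of the WebSocket transport.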

- Domain: IoT Embedded

- Audience: Embedded systems engineers and IoT developers building gesture-controlled applications

Generated by Diagrams.so, an AI architecture diagram generator with native Draw.io output. Fork this diagram, remix it, or download it as .drawio, PNG, or SVG.