Hand Gesture Slide and Mouse Control System

About This Architecture

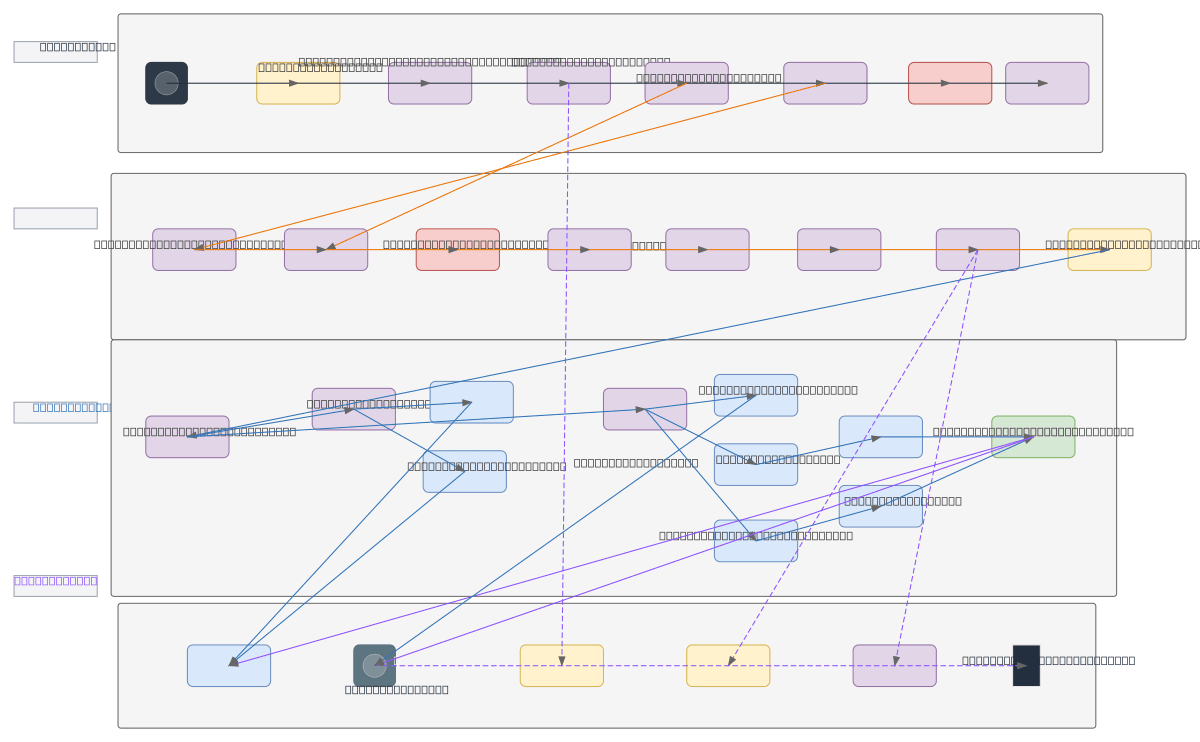

This architecture describes a real-time hand gesture recognition system that uses MediaPipe to control slides and the mouse without physical input devices. Webcam frames pass through preprocessing, hand landmark extraction with MediaPipe's 21-point hand model, and a CNN/LSTM gesture classifier that detects swipes, pinches, and finger states. The Gesture Recognition Engine emits confidence-scored commands to a Control Dispatcher, which maps gestures to PowerPoint/Keynote slide navigation and OS-level mouse control via PyAutoGUI. The design enables touchless presentation control and accessibility-focused input, and it reduces reliance on hardware peripherals. It targets sub-100ms latency, while temporal smoothing and confidence thresholding filter out jittery or low-confidence predictions. Fork and customize this diagram on Diagrams.so to adapt gesture mappings, add new classifiers, or integrate alternative hand tracking models.
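To make the landmark stage concrete, here is a minimal sketch of how finger states and a pinch could be derived from one frame of 21 hand landmarks. It is a rule-based stand-in for illustration, not the CNN/LSTM classifier the diagram names. The landmark indices follow MediaPipe's hand model; the function names, the `(x, y)` tuple input, the upright-hand assumption, and the `ratio` threshold are assumptions made for this example.

```python
import math
from typing import List, Sequence, Tuple

# One landmark as normalized image coordinates (x, y); y grows downward.
Landmark = Tuple[float, float]

# Indices from MediaPipe's 21-point hand model.
WRIST = 0
THUMB_TIP = 4
INDEX_PIP, INDEX_TIP = 6, 8
MIDDLE_MCP = 9
MIDDLE_PIP, MIDDLE_TIP = 10, 12
RING_PIP, RING_TIP = 14, 16
PINKY_PIP, PINKY_TIP = 18, 20


def fingers_extended(landmarks: Sequence[Landmark]) -> List[bool]:
    """Return extended/curled flags for index, middle, ring, and pinky.

    Assumes an upright hand facing the camera: a finger counts as
    extended when its tip is above (smaller y than) its PIP joint.
    """
    joints = [
        (INDEX_PIP, INDEX_TIP),
        (MIDDLE_PIP, MIDDLE_TIP),
        (RING_PIP, RING_TIP),
        (PINKY_PIP, PINKY_TIP),
    ]
    return [landmarks[tip][1] < landmarks[pip][1] for pip, tip in joints]


def is_pinch(landmarks: Sequence[Landmark], ratio: float = 0.25) -> bool:
    """Detect a thumb-index pinch, normalized by hand size.

    Hand size is approximated by the wrist-to-middle-MCP distance so the
    threshold does not depend on how far the hand is from the camera.
    The default ratio is an illustrative guess and would need tuning.
    """
    hand_size = math.dist(landmarks[WRIST], landmarks[MIDDLE_MCP])
    if hand_size == 0:
        return False
    gap = math.dist(landmarks[THUMB_TIP], landmarks[INDEX_TIP])
    return gap / hand_size < ratio
```

In a live pipeline, the input list would be built from the `x` and `y` fields of each landmark returned by the hand tracker. Swipes depend on motion across frames, so they need a temporal model or a displacement check rather than a single-frame rule like this one.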

People also ask

How do you build a real-time hand gesture recognition system for touchless slide and mouse control?

This diagram shows a complete pipeline: webcam input → MediaPipe 21-landmark hand tracking → CNN/LSTM gesture classifier → confidence-scored command dispatcher → PyAutoGUI OS input injection. Temporal smoothing and gesture confidence thresholding ensure reliable, low-latency control for presentations and accessibility applications.
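The smoothing, thresholding, and dispatch steps can be sketched as follows. This is one possible approach under stated assumptions: a majority vote over a short window of per-frame predictions, with low-confidence frames discarded. The class name `GestureSmoother`, the gesture labels, the `KEY_BINDINGS` table, and all default values are hypothetical. The key sender is passed in as a callable so the logic can run without a display; in a real setup it could be `pyautogui.press`.

```python
from collections import Counter, deque
from typing import Callable, Deque, Dict, Optional


class GestureSmoother:
    """Confidence thresholding plus majority-vote temporal smoothing."""

    def __init__(self, window: int = 5, min_confidence: float = 0.8,
                 min_votes: int = 3) -> None:
        self.min_confidence = min_confidence
        self.min_votes = min_votes
        self.history: Deque[Optional[str]] = deque(maxlen=window)
        self.active: Optional[str] = None  # gesture currently considered stable

    def update(self, label: str, confidence: float) -> Optional[str]:
        """Feed one per-frame prediction.

        Returns a gesture label once, on the frame where it becomes
        stable, and None on every other frame.
        """
        # Frames below the confidence threshold count as "no gesture".
        self.history.append(label if confidence >= self.min_confidence else None)

        votes = Counter(item for item in self.history if item is not None)
        stable: Optional[str] = None
        if votes:
            top, count = votes.most_common(1)[0]
            if count >= self.min_votes:
                stable = top

        if stable == self.active:
            return None  # no change, so a held gesture fires only once
        self.active = stable
        return stable


# Hypothetical mapping from gesture labels to key names.
KEY_BINDINGS: Dict[str, str] = {"swipe_left": "right", "swipe_right": "left"}


def dispatch(gesture: Optional[str], send_key: Callable[[str], None],
             bindings: Dict[str, str] = KEY_BINDINGS) -> bool:
    """Send the key bound to a gesture; return True if a key was sent."""
    key = bindings.get(gesture) if gesture else None
    if key is None:
        return False
    send_key(key)
    return True
```

A per-frame loop would call `dispatch(smoother.update(label, confidence), pyautogui.press)`. The window is a trade-off: with `min_votes=3`, a gesture fires on its third agreeing frame, which is about 67 ms after the first detection at 30 FPS. Larger windows are more stable but eat into the latency budget.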

- Domain: ML Pipeline

- Audience: Computer vision engineers building real-time hand gesture recognition systems

Generated by Diagrams.so — AI architecture diagram generator with native Draw.io output. Fork this diagram, remix it, or download as .drawio, PNG, or SVG.