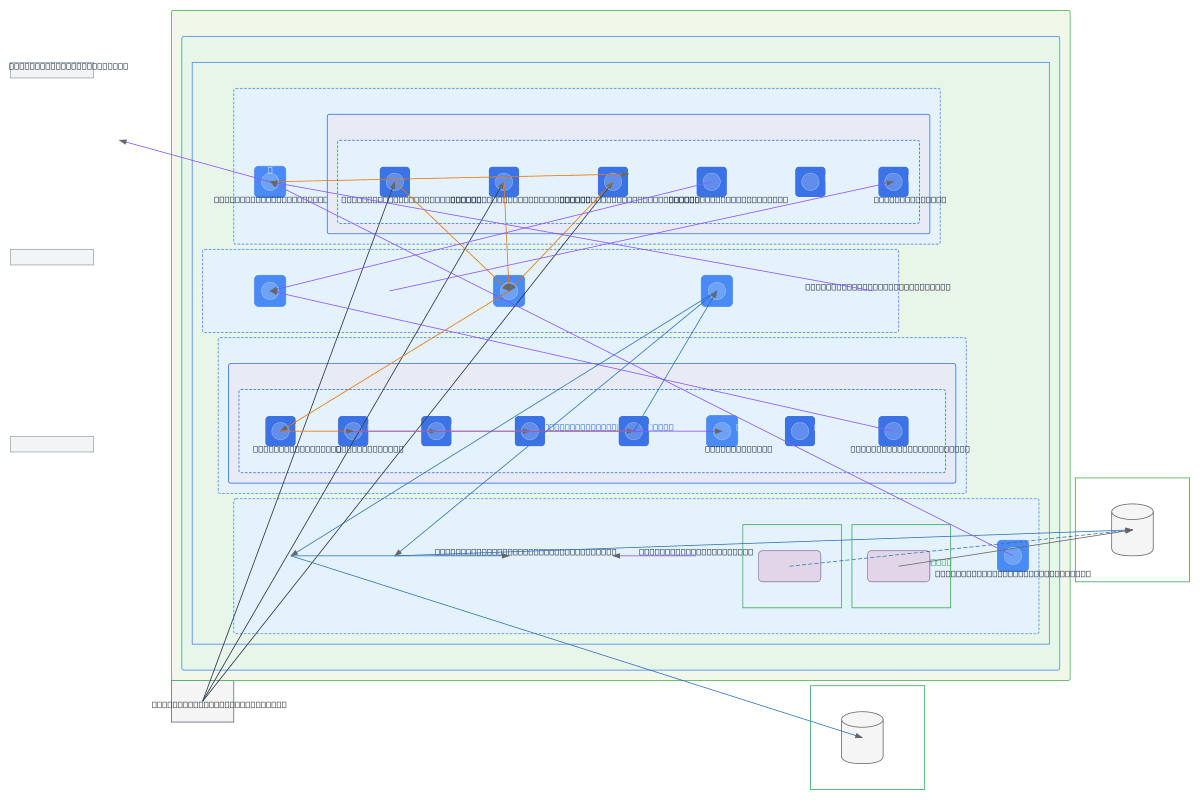

GCP End-to-End Data Ingestion Pipeline

About This Architecture

An end-to-end GCP data ingestion pipeline that orchestrates REST API collection, EDI file processing, and event-driven loads across three specialized GKE clusters running in a VPC with isolated subnets. Cloud Composer triggers API Fetch Jobs in the Ingestion cluster, which write raw data to GCS staging. The EDI Processing cluster handles malware scanning, DLP checks, decryption, and format conversion before publishing to Pub/Sub topics. Snowpipe consumers in the Snowflake DEV and PROD environments ingest the cleaned data, while Secret Manager and Workload Identity enforce least-privilege access across all stages. Fork this diagram to customize subnet ranges, add Cloud Dataflow transforms, or integrate additional data sources and destinations.
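The EDI Processing cluster's checks act as a gate: a file should only reach Pub/Sub if every check passes. The stdlib-only sketch below illustrates that fail-closed chain under loudly stated assumptions. The function names are hypothetical; base64 decoding stands in for real decryption, a SHA-256 denylist stands in for a malware scanner, and an SSN-shaped regex stands in for a Cloud DLP inspection. The description lists the steps without fixing their order, so this sketch assumes decryption runs first so that the scans inspect plaintext rather than ciphertext.

```python
import base64
import hashlib
import re
from typing import Callable, List, Tuple

# Hypothetical denylist of SHA-256 digests; a stand-in for a real malware scanner.
KNOWN_BAD_SHA256 = {hashlib.sha256(b"EICAR-TEST").hexdigest()}

# Crude stand-in for a Cloud DLP inspection: flags US SSN-shaped strings.
SSN_PATTERN = re.compile(rb"\b\d{3}-\d{2}-\d{4}\b")


class Rejected(Exception):
    """Raised when a stage refuses a file so the caller can quarantine it."""


def decrypt(payload: bytes) -> bytes:
    # Placeholder only: base64 decoding stands in for real decryption (e.g. PGP).
    return base64.b64decode(payload, validate=True)


def malware_scan(payload: bytes) -> bytes:
    if hashlib.sha256(payload).hexdigest() in KNOWN_BAD_SHA256:
        raise Rejected("malware signature match")
    return payload


def dlp_check(payload: bytes) -> bytes:
    if SSN_PATTERN.search(payload):
        raise Rejected("sensitive data detected")
    return payload


# Assumed order: decrypt first so the scans see plaintext, not ciphertext.
STAGES: List[Callable[[bytes], bytes]] = [decrypt, malware_scan, dlp_check]


def process(payload: bytes) -> Tuple[str, str]:
    """Run every stage in order; any failure quarantines the file (fail closed)."""
    data = payload
    try:
        for stage in STAGES:
            data = stage(data)
        # Only fully checked plaintext reaches the publish step.
        return "publish", data.decode("utf-8")
    except Rejected as exc:
        return "quarantine", str(exc)
    except ValueError as exc:
        # binascii.Error and UnicodeDecodeError are both ValueError subclasses.
        return "quarantine", f"undecodable input: {exc}"


if __name__ == "__main__":
    print(process(base64.b64encode(b"ST*850*0001~")))
```

Returning a status instead of raising keeps the orchestration simple: the caller routes `"publish"` results onward and moves `"quarantine"` results aside, so a broken or suspicious file never silently reaches downstream consumers.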

People also ask

How do you build a secure, scalable data ingestion pipeline on GCP that handles REST APIs, EDI files, malware scanning, and loads to Snowflake?

This diagram shows a three-stage GCP pipeline. Stage 1 uses Cloud Composer and GKE to fetch REST API data into GCS staging. Stage 2 processes EDI files in a separate GKE cluster, applying malware scanning, DLP checks, decryption, and format conversion. Stage 3 publishes the cleaned data to Pub/Sub topics for Snowpipe consumption. Workload Identity and Secret Manager enforce least-privilege access throughout.
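The format-conversion step is where EDI becomes something Snowpipe can load, but the source does not name the EDI standard or the target format. As a minimal sketch, assuming ANSI X12 input with `~` as the segment terminator and `*` as the element separator, and newline-delimited JSON as output, the conversion could look like this. The names `parse_x12` and `to_ndjson` and the `{"segment", "elements"}` record shape are hypothetical.

```python
import json
from typing import Dict, List, Union

Record = Dict[str, Union[str, List[str]]]


def parse_x12(raw: str, segment_sep: str = "~", element_sep: str = "*") -> List[Record]:
    """Split an X12 payload into one record per segment.

    Real interchanges declare their separators in the fixed-width ISA header;
    this sketch simply takes them as parameters.
    """
    records: List[Record] = []
    for segment in raw.split(segment_sep):
        segment = segment.strip()  # tolerate line breaks between segments
        if not segment:
            continue
        elements = segment.split(element_sep)
        # The first element is the segment ID (e.g. ST, BEG, SE); the rest are data.
        records.append({"segment": elements[0], "elements": elements[1:]})
    return records


def to_ndjson(records: List[Record]) -> str:
    """Serialize records as newline-delimited JSON, one object per line."""
    return "".join(json.dumps(r, separators=(",", ":")) + "\n" for r in records)


if __name__ == "__main__":
    sample = "ST*850*0001~\nBEG*00*SA*PO123**20240101~\nSE*3*0001~\n"
    print(to_ndjson(parse_x12(sample)), end="")
```

Empty elements (such as the `**` in the `BEG` segment) are kept as empty strings so element positions stay stable. Newline-delimited JSON is a format Snowflake should be able to load with a `TYPE = JSON` file format, though a production converter would map segments to typed columns per transaction set rather than emit raw element lists.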

- Domain: Data Engineering
- Audience: Data engineers building multi-stage ingestion pipelines on GCP with Kubernetes and Airflow

Generated by Diagrams.so, an AI architecture diagram generator with native Draw.io output.