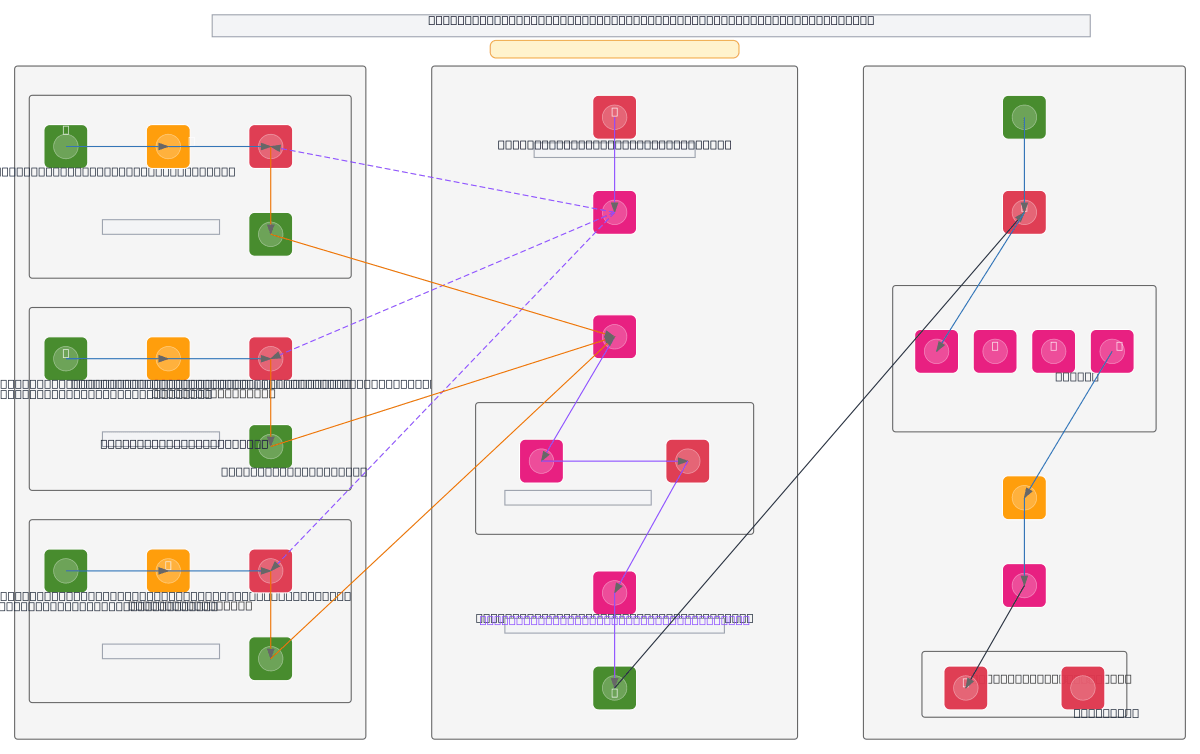

Federated Learning Pneumonia Detection System

About This Architecture

The Federated Learning Pneumonia Detection System trains ResNet18 models locally at three hospital clients, each holding 3,200 to 3,500 chest X-ray images, so raw medical data never leaves client premises. Each hospital preprocesses its images (224x224 resize, augmentation, normalization) and trains independently, transmitting only model weights to the AWS Federated Learning Server for adaptive weighted aggregation. The server runs 10 communication rounds of broadcast-train-aggregate cycles, weighting client contributions by dataset size (w1=0.34, w2=0.30, w3=0.37) to produce a global model stored in S3. Final evaluation runs SageMaker Batch Transform on held-out test data and logs accuracy, precision, recall, F1-score, and confusion matrices for the pneumonia classifier to CloudWatch. Fork this diagram to customize hospital counts, dataset sizes, or communication rounds, to swap in an alternative strategy such as FedProx, or to add differential privacy mechanisms.
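The weighted aggregation step can be illustrated with a minimal sketch. It assumes a FedAvg-style weighted average, which the description does not confirm as the exact method behind "adaptive weighted aggregation". The `State` type, the `aggregate` function, and the toy parameter values are hypothetical. A real ResNet18 state dict would hold tensors rather than flat lists. The weights are the ones quoted above. As printed they sum to 1.01, so the sketch renormalizes them before averaging.

```python
from typing import Dict, List

# Hypothetical flat stand-in for a model state dict (parameter name -> values).
State = Dict[str, List[float]]


def aggregate(client_states: List[State], weights: List[float]) -> State:
    """Weighted average of client parameters (FedAvg-style, an assumption)."""
    if len(client_states) != len(weights):
        raise ValueError("need exactly one weight per client")
    total = sum(weights)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    # Renormalize so the weights sum to exactly 1 (0.34 + 0.30 + 0.37 = 1.01).
    norm = [w / total for w in weights]
    global_state: State = {}
    for name in client_states[0]:
        size = len(client_states[0][name])
        global_state[name] = [
            sum(w * state[name][i] for w, state in zip(norm, client_states))
            for i in range(size)
        ]
    return global_state


# Toy parameters for three hospital clients (illustrative values only).
clients = [
    {"fc.weight": [1.0, 2.0], "fc.bias": [0.0]},
    {"fc.weight": [2.0, 4.0], "fc.bias": [1.0]},
    {"fc.weight": [3.0, 6.0], "fc.bias": [2.0]},
]
global_model = aggregate(clients, [0.34, 0.30, 0.37])
```

Renormalizing keeps the global parameters on the same scale as the client parameters even when the published weights are rounded.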

People also ask

How can hospitals collaboratively train a pneumonia detection model without sharing raw patient X-ray data?

This federated learning architecture trains a ResNet18 model locally at each hospital on that hospital's own chest X-ray dataset, then sends only the model weights to an AWS server, which combines them by adaptive weighted aggregation over 10 communication rounds. Raw medical images never leave client premises, preserving privacy while all hospitals contribute to a shared global pneumonia classifier.
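The broadcast-train-aggregate cycle described above can be sketched as a toy simulation. Everything here is a simplified assumption. The "model" is a single float, `local_train` is a hypothetical stand-in for local ResNet18 training, and the client data are made-up numbers rather than X-rays. The sketch only shows that each client returns parameters and never its data, and that the server weights those parameters by dataset size.

```python
from typing import List

ROUNDS = 10  # communication rounds, as stated in the description


def local_train(global_w: float, data: List[float],
                lr: float = 0.1, steps: int = 5) -> float:
    """Hypothetical local training: gradient steps on mean squared error."""
    w = global_w
    for _ in range(steps):
        grad = sum(2 * (w - x) for x in data) / len(data)
        w -= lr * grad
    return w  # only the updated parameter leaves the client, never `data`


def run_federation(client_data: List[List[float]], rounds: int = ROUNDS) -> float:
    """Run broadcast-train-aggregate rounds and return the global parameter."""
    sizes = [len(d) for d in client_data]
    total = sum(sizes)
    global_w = 0.0
    for _ in range(rounds):
        # Broadcast the global parameter, then train locally at each client.
        local_ws = [local_train(global_w, d) for d in client_data]
        # Aggregate, weighting each client by its share of the total data.
        global_w = sum(n / total * w for n, w in zip(sizes, local_ws))
    return global_w


# Made-up local datasets for three clients (illustrative values only).
hospital_data = [[1.0, 2.0, 3.0], [4.0, 5.0], [6.0, 7.0, 8.0, 9.0]]
final_w = run_federation(hospital_data)
```

In this toy setting the global parameter approaches the mean of all client data combined, even though the server never sees any individual data point.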

- Domain: ML Pipeline

- Audience: ML engineers and healthcare data scientists implementing federated learning for medical imaging

Generated by Diagrams.so, an AI architecture diagram generator with native Draw.io output.