Battleship AWS Workshop - From Cadet to Admiral

About This Architecture

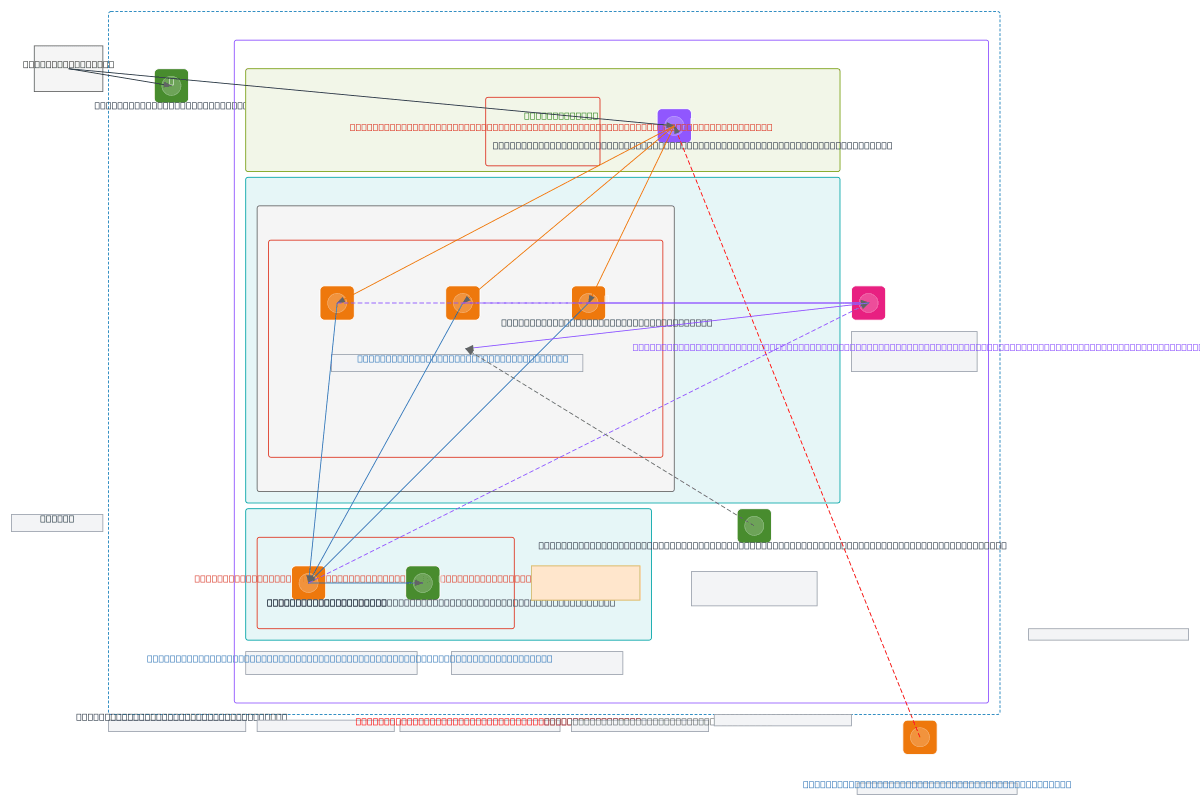

Three-tier web application architecture demonstrating AWS auto-scaling under adversarial load, with stateless EC2 instances in an Auto Scaling Group fronted by an Application Load Balancer. Traffic flows from the internet to the ALB in a public subnet, then to backend Python instances in a private subnet; all state is persisted to a database server, backed by an encrypted EBS volume, in a separate data subnet. CloudWatch monitors CPU utilization in real time and triggers scale-out when average CPU utilization exceeds 70%, while security groups enforce least-privilege access between tiers. This workshop-grade design illustrates horizontal scaling best practices, the critical role of AMIs in rapid instance provisioning, and the single-point-of-failure risk of an EC2-hosted database, which is why production deployments should use RDS Multi-AZ or managed clusters. Fork this diagram to customize subnets, scaling policies, or the database tier, then download it as .drawio or .svg to embed in runbooks and architecture documentation.
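The least-privilege chaining of security groups between tiers can be modeled as a small Python sketch. It is a simplified local model, not the AWS API: the group names (`alb-sg`, `app-sg`, `db-sg`), the application port 8000, and the database port 5432 (which assumes PostgreSQL) are all hypothetical, and sources are matched by exact name rather than by CIDR containment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IngressRule:
    """One inbound rule: traffic on `port` is admitted from `source`."""
    port: int
    source: str  # "internet" stands in for 0.0.0.0/0; otherwise a security group name


# Hypothetical groups and ports: HTTPS on the ALB, 8000 for the Python app,
# and 5432 for the database (assumes PostgreSQL). None of these come from the diagram.
SECURITY_GROUPS: dict[str, list[IngressRule]] = {
    "alb-sg": [IngressRule(port=443, source="internet")],
    "app-sg": [IngressRule(port=8000, source="alb-sg")],
    "db-sg": [IngressRule(port=5432, source="app-sg")],
}


def is_allowed(source: str, dest_group: str, port: int) -> bool:
    """Return True if any inbound rule on dest_group admits `source` on `port`."""
    return any(
        rule.port == port and rule.source == source
        for rule in SECURITY_GROUPS.get(dest_group, [])
    )


if __name__ == "__main__":
    print(is_allowed("internet", "alb-sg", 443))  # True: public entry point
    print(is_allowed("internet", "db-sg", 5432))  # False: the data tier is not exposed
```

Each tier accepts traffic only from the tier directly in front of it, so a request from the internet cannot reach the application or database instances directly. In a real VPC the same effect comes from referencing the upstream security group, rather than a CIDR block, as the source of each inbound rule.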

People also ask

How do I design a scalable AWS web application that automatically adds EC2 instances when CPU exceeds 70%?

This diagram shows a three-tier AWS architecture in which an Application Load Balancer distributes traffic to stateless Python EC2 instances in an Auto Scaling Group (min 1, desired 2, max 4). CloudWatch monitors CPU utilization and triggers scale-out when average CPU utilization exceeds 70%. Because all application state persists in the database tier on an encrypted EBS volume, instances can be added or removed horizontally without manual configuration.
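The scaling behavior can be approximated with a small local simulation. This is a simplified model, not the algorithm AWS uses: the min/desired/max sizes and the 70% trigger come from the diagram, while the three-datapoint evaluation window, the one-instance step, and the 30% scale-in threshold are assumptions added for illustration.

```python
# Sizes and the 70% trigger come from the diagram; everything else is an assumption.
MIN_SIZE, DESIRED_SIZE, MAX_SIZE = 1, 2, 4
SCALE_OUT_CPU = 70.0    # scale out when average CPU exceeds this (from the diagram)
SCALE_IN_CPU = 30.0     # hypothetical scale-in threshold, not stated in the diagram
EVALUATION_PERIODS = 3  # hypothetical: consecutive datapoints that must all breach


def next_capacity(current: int, cpu_samples: list[float]) -> int:
    """Return the new desired capacity after evaluating recent CPU datapoints.

    Each sample stands for the group's average CPU utilization over one period.
    The group grows or shrinks by one instance only when every one of the last
    EVALUATION_PERIODS samples breaches a threshold, and the result is clamped
    to [MIN_SIZE, MAX_SIZE].
    """
    recent = cpu_samples[-EVALUATION_PERIODS:]
    if len(recent) < EVALUATION_PERIODS:
        return current  # not enough data yet, so leave the group unchanged
    if all(sample > SCALE_OUT_CPU for sample in recent):
        current += 1
    elif all(sample < SCALE_IN_CPU for sample in recent):
        current -= 1
    return max(MIN_SIZE, min(MAX_SIZE, current))


if __name__ == "__main__":
    capacity = DESIRED_SIZE
    # A sustained spike, then a quiet period.
    for window in ([75, 82, 90], [88, 91, 95], [93, 97, 99], [10, 12, 15]):
        capacity = next_capacity(capacity, window)
        print(capacity)  # prints 3, then 4, then 4, then 3
```

Clamping to `MAX_SIZE` is what keeps a sustained CPU spike from launching more than four instances; in AWS, the Auto Scaling Group enforces its configured minimum and maximum regardless of what a scaling policy requests.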

- Domain: Cloud / AWS
- Audience: AWS solutions architects designing resilient web applications with auto-scaling

Generated by Diagrams.so, an AI architecture diagram generator with native Draw.io output.