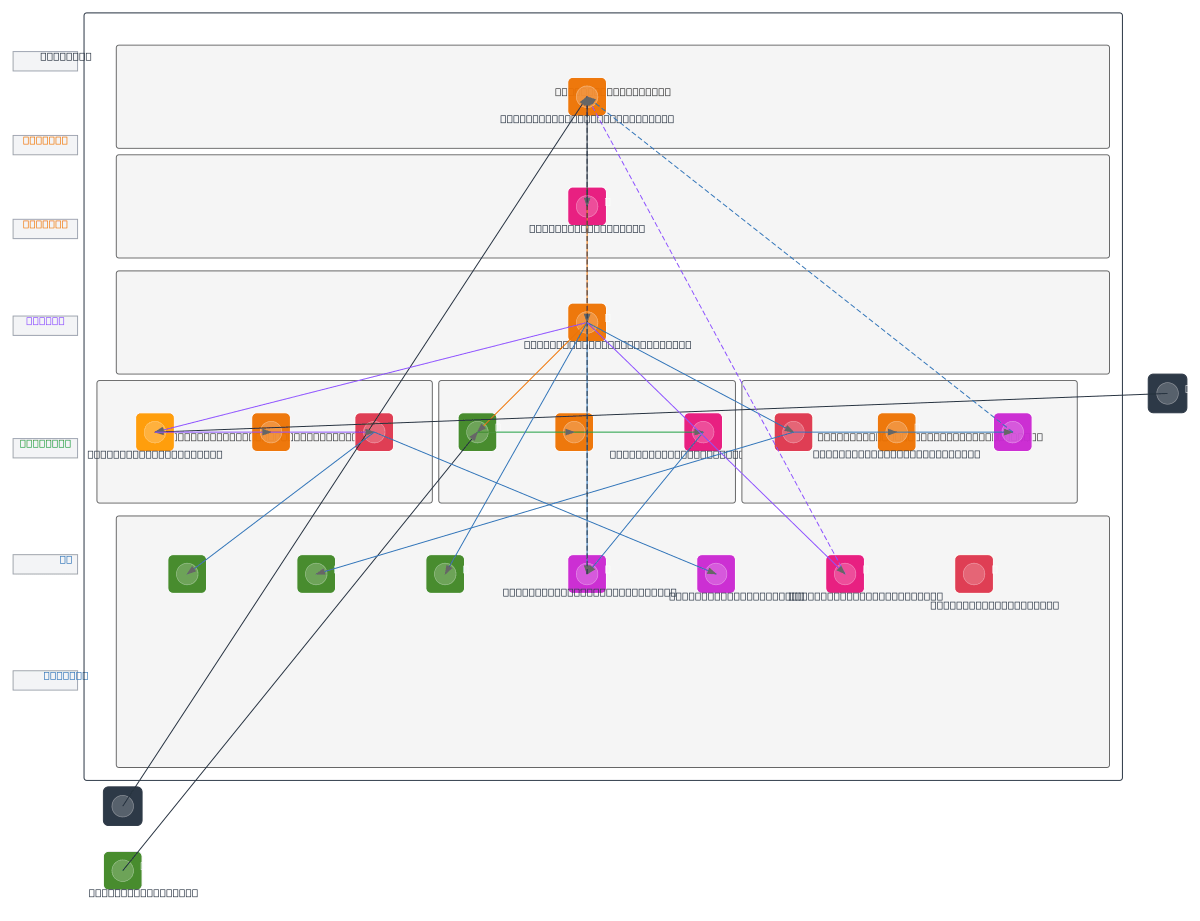

AWS Smart Automation System Architecture

About This Architecture

The AWS Smart Automation System Architecture integrates computer vision, robotic control, and machine learning inference into a fully serverless pipeline on AWS. Users interact through an AWS Amplify web dashboard, which routes requests through API Gateway to Lambda functions. Those functions orchestrate Kinesis Video Streams for camera input, AWS IoT Core for Dobot arm commands, and Amazon SageMaker for predictive analytics. Computer vision frames flow from the camera through Kinesis Video Streams to Lambda frame processors, then to Amazon Rekognition for object detection; the results are stored in S3 and DynamoDB for attendance tracking and audit logs. The architecture demonstrates event-driven automation at scale, combining real-time video processing, ML inference, and IoT device control with full observability through CloudWatch and centralized access control through IAM. Fork this diagram on Diagrams.so to customize it for your robotics, manufacturing, or surveillance use case, or download it as .drawio to integrate into your architecture documentation.
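As a rough illustration of the frame-processing path described above, the following Python sketch shows what a Lambda frame processor might look like. It is not taken from the diagram. The table name `DetectionResults`, the `camera_id` and `captured_at` keys, and the event shape (a base64-encoded frame in `frame_b64`) are all assumptions made for the example. It also assumes the frame has already been extracted from the video stream before the function runs. The `detect_labels` and `put_item` calls are the standard boto3 operations for Rekognition and the DynamoDB table resource.

```python
from datetime import datetime, timezone
from decimal import Decimal


def detect_and_record(frame_bytes, rekognition, table, camera_id, min_confidence=80.0):
    """Detect objects in one JPEG/PNG frame and store the labels in DynamoDB."""
    # Rekognition's label detection call; clients are passed in so the
    # function can be exercised with stand-ins outside of AWS.
    response = rekognition.detect_labels(
        Image={"Bytes": frame_bytes},
        MaxLabels=10,
        MinConfidence=min_confidence,
    )
    labels = [
        # The DynamoDB resource API rejects Python floats, so convert to Decimal.
        {"name": label["Name"], "confidence": Decimal(str(label["Confidence"]))}
        for label in response["Labels"]
    ]
    item = {
        "camera_id": camera_id,  # hypothetical partition key
        "captured_at": datetime.now(timezone.utc).isoformat(),  # hypothetical sort key
        "labels": labels,
    }
    table.put_item(Item=item)
    return item


def lambda_handler(event, context):
    # Imported here so the helper above can be tested without boto3 installed.
    import base64
    import boto3

    rekognition = boto3.client("rekognition")
    table = boto3.resource("dynamodb").Table("DetectionResults")  # hypothetical table name
    frame = base64.b64decode(event["frame_b64"])  # hypothetical event shape
    item = detect_and_record(frame, rekognition, table, event["camera_id"])
    return {"label_count": len(item["labels"])}
```

Passing the clients in as arguments keeps the detection logic separate from the AWS wiring, which makes it easier to unit test with simple stand-in objects.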

People also ask

How do I build a serverless AWS architecture for real-time computer vision and robotic arm control?

This diagram shows a complete event-driven automation system. Camera feeds stream through Kinesis Video Streams to Lambda frame processors, which invoke Amazon Rekognition for object detection, while AWS IoT Core manages Dobot robotic arm commands triggered by Lambda control functions. SageMaker handles predictive analytics, with all results logged to DynamoDB and CloudWatch for monitoring an
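For the robotic arm side, a Lambda control function could publish commands to the arm over MQTT through AWS IoT Core. The sketch below is one possible shape for that step, not the diagram author's implementation. The topic layout `dobot/<device_id>/commands`, the command schema, and the event fields are hypothetical. The `publish` call is the standard boto3 `iot-data` client operation.

```python
import json


def build_arm_command(x, y, z, grip):
    """Build an illustrative move command; this schema is hypothetical."""
    return {"action": "move", "position": {"x": x, "y": y, "z": z}, "grip": bool(grip)}


def send_arm_command(iot_data, device_id, command):
    """Publish one command to the arm's MQTT topic and return the topic used."""
    topic = f"dobot/{device_id}/commands"  # hypothetical topic layout
    # QoS 1 asks the broker for at-least-once delivery.
    iot_data.publish(topic=topic, qos=1, payload=json.dumps(command).encode("utf-8"))
    return topic


def lambda_handler(event, context):
    # Imported here so the helpers above can be tested without boto3 installed.
    import boto3

    iot_data = boto3.client("iot-data")
    command = build_arm_command(
        event["x"], event["y"], event["z"], event.get("grip", False)  # hypothetical event fields
    )
    topic = send_arm_command(iot_data, event["device_id"], command)
    return {"published_to": topic}
```

A device-side program subscribed to the same topic would then translate each message into the arm's own motion calls; that part is outside this sketch.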

- Domain: Cloud AWS

- Audience: AWS solutions architects designing intelligent automation systems with computer vision and robotics

Generated by Diagrams.so — AI architecture diagram generator with native Draw.io output. Fork this diagram, remix it, or download as .drawio, PNG, or SVG.