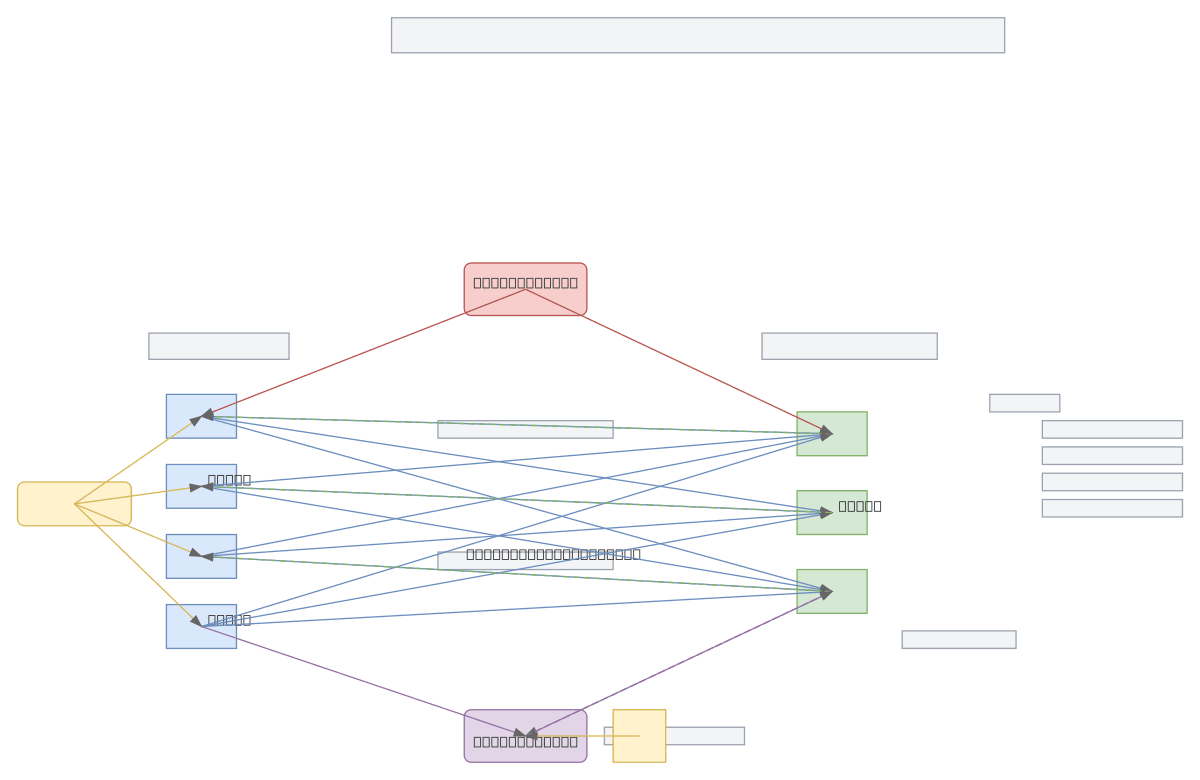

ART Neural Network Architecture

About This Architecture

This diagram shows an Adaptive Resonance Theory (ART) neural network: an F1 input layer and an F2 recognition layer linked in both directions by bottom-up and top-down weighted pathways. External input feeds the F1 layer, while gain control and reset modules regulate network dynamics according to the vigilance parameter rho. Learning is unsupervised: the network resonates when an input pattern matches a learned prototype closely enough, which allows stable category learning that is designed to avoid catastrophic forgetting. The reset module's mismatch detection and the vigilance threshold together set the balance between plasticity and stability in pattern recognition tasks. Fork this diagram on Diagrams.so to customize layer sizes, adjust weight configurations, or add control modules for your ART implementation.
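As a rough illustration of the reset module's vigilance check, here is a minimal sketch that assumes the binary ART1 variant, where the match ratio is the overlap between input and prototype divided by the size of the input. The diagram does not specify a variant, so the function name `passes_vigilance` and the example patterns are hypothetical.

```python
import numpy as np

def passes_vigilance(input_pattern, prototype, rho):
    """Compare a binary input with a binary top-down prototype.

    Assumes the ART1 match rule: |input AND prototype| / |input| >= rho.
    Returns (match_ratio, passed).
    """
    x = np.asarray(input_pattern, dtype=bool)
    w = np.asarray(prototype, dtype=bool)
    active = x.sum()
    if active == 0:
        raise ValueError("input pattern must have at least one active bit")
    match_ratio = float(np.logical_and(x, w).sum() / active)
    return match_ratio, match_ratio >= rho

# Hypothetical input and learned prototype (illustration only).
pattern = [1, 1, 0, 1, 0]
prototype = [1, 1, 0, 0, 0]

ratio, ok_low = passes_vigilance(pattern, prototype, rho=0.5)   # resonance
_, ok_high = passes_vigilance(pattern, prototype, rho=0.9)      # reset
```

Two of the three active input bits overlap the prototype, so the same pair resonates at a low vigilance and triggers a reset at a high one. A higher rho therefore produces finer categories.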

People also ask

How does the Adaptive Resonance Theory neural network architecture work with F1 and F2 layers?

ART connects the F1 (input) and F2 (recognition) layers in both directions: bottom-up weights select a candidate category in F2, and top-down weights send that category's learned prototype back to F1 for comparison with the input. The vigilance parameter rho sets the threshold used by the reset module. When the similarity between input and prototype falls below rho, the reset module registers a mismatch and suppresses the active F2 node, so the network can try another category or form a new one. This is how the network learns new categories while preserving existing knowledge.
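The search cycle described in this answer can be sketched in a few lines of Python. This is a simplified ART1-style illustration, not the implementation behind the diagram. It assumes binary inputs, fast learning (the prototype becomes the overlap with the input), and a choice parameter `beta`, and it omits gain control. The class name `ART1Sketch` and all patterns are hypothetical.

```python
import numpy as np

class ART1Sketch:
    """Simplified ART1-style clustering with fast learning (illustrative only)."""

    def __init__(self, rho=0.7, beta=1.0):
        self.rho = rho          # vigilance threshold used by the reset check
        self.beta = beta        # assumed choice parameter for F2 activation
        self.prototypes = []    # one binary top-down template per F2 category

    def present(self, pattern):
        """Present one binary pattern and return the index of its category."""
        x = np.asarray(pattern, dtype=bool)
        if not x.any():
            raise ValueError("input pattern must have at least one active bit")
        # Bottom-up pass: activation of every existing F2 category.
        scores = np.array([np.logical_and(x, w).sum() / (self.beta + w.sum())
                           for w in self.prototypes], dtype=float)
        # Search F2 nodes from most to least active; failing the check = reset.
        for j in np.argsort(-scores, kind="stable"):
            overlap = np.logical_and(x, self.prototypes[j])
            if overlap.sum() / x.sum() >= self.rho:
                self.prototypes[j] = overlap   # resonance: refine the prototype
                return int(j)
        # Every category was reset (or none exists yet): commit a new one.
        self.prototypes.append(x.copy())
        return len(self.prototypes) - 1

patterns = [[1, 1, 0, 0, 0], [1, 1, 1, 0, 0], [0, 0, 0, 1, 1], [1, 1, 0, 0, 0]]

loose = ART1Sketch(rho=0.6)
loose_labels = [loose.present(p) for p in patterns]    # [0, 0, 1, 0]

strict = ART1Sketch(rho=0.9)
strict_labels = [strict.present(p) for p in patterns]  # [0, 1, 2, 0]
```

With rho = 0.6 the second pattern joins the first category, while rho = 0.9 forces it into a category of its own. In both runs the first pattern is recognized again on its second presentation, which reflects the stability side of the trade-off.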

- Domain: ML Pipeline

- Audience: machine learning researchers and neural network architects studying adaptive resonance theory

Generated by Diagrams.so, an AI architecture diagram generator with native Draw.io output.