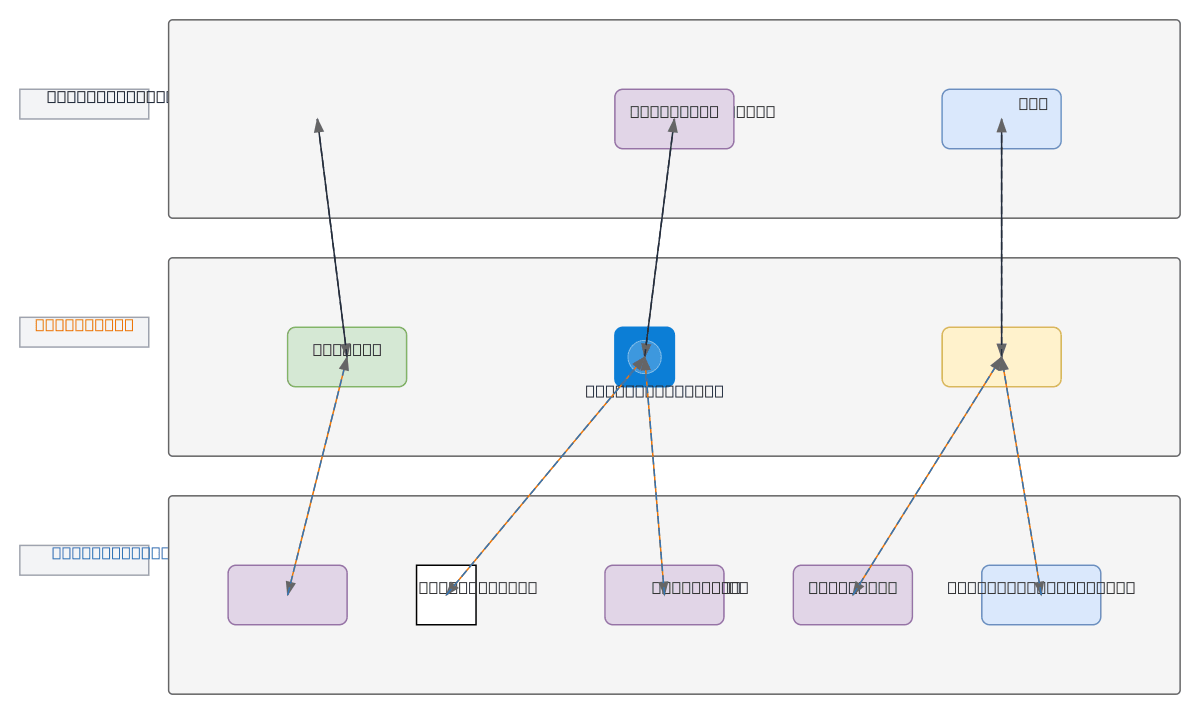

AI Coding Harness with Gateway and LLM Providers

About This Architecture

This multi-LLM gateway architecture unifies Azure OpenAI, OpenAI, Anthropic, Google AI, and local Ollama providers behind a LiteLLM/PortKey layer that handles routing and budget control. VS Code, CLI, and AI Agents connect to a centralized AI Gateway, which routes each request to a provider, enforces spending limits, and logs all interactions across the heterogeneous LLM endpoints. Because application code talks only to the gateway rather than to provider-specific APIs, teams can optimize cost, fail over between providers, and switch vendors without rewriting integrations. The Budget Control and Logging components supply the governance and observability that production AI workloads need. Fork and customize this diagram on Diagrams.so to match your multi-cloud LLM strategy, then embed it in architecture docs or runbooks.
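The routing, budget, and logging responsibilities described above can be illustrated with a small, library-free sketch. It is not how LiteLLM or PortKey are implemented; the `Provider` and `Gateway` classes, the flat per-call cost, and the stand-in provider functions are all hypothetical names chosen for illustration.

```python
import logging
from dataclasses import dataclass
from typing import Callable, List

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("gateway")


class NoProviderAvailable(Exception):
    """Raised when every provider failed or was skipped for budget reasons."""


@dataclass
class Provider:
    name: str
    cost_per_call: float        # hypothetical flat cost in USD per request
    call: Callable[[str], str]  # stand-in for a real provider SDK call


@dataclass
class Gateway:
    providers: List[Provider]   # ordered by routing preference
    budget_usd: float
    spent_usd: float = 0.0

    def complete(self, prompt: str) -> str:
        for p in self.providers:
            # Budget control: skip any provider that would overspend.
            if self.spent_usd + p.cost_per_call > self.budget_usd:
                log.info("skip %s: would exceed budget", p.name)
                continue
            try:
                reply = p.call(prompt)
            except Exception as exc:
                # Failover: log the error and try the next provider.
                log.warning("%s failed (%s), trying next", p.name, exc)
                continue
            self.spent_usd += p.cost_per_call
            log.info("served by %s, total spend %.4f", p.name, self.spent_usd)
            return reply
        raise NoProviderAvailable("no provider succeeded within budget")


# Stand-in providers: one that always times out, one local echo.
def flaky_cloud(prompt: str) -> str:
    raise TimeoutError("upstream timeout")


def local_model(prompt: str) -> str:
    return f"ollama-echo: {prompt}"


gw = Gateway(
    providers=[
        Provider("azure-openai", 0.02, flaky_cloud),
        Provider("ollama", 0.0, local_model),
    ],
    budget_usd=0.05,
)
print(gw.complete("hello"))
```

In this sketch the caller only ever sees `Gateway.complete`, so changing the provider order or swapping a provider does not touch application code, which is the decoupling the architecture aims for.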

People also ask

How do I build a multi-LLM gateway on Azure that routes requests across OpenAI, Azure OpenAI, Anthropic, and local Ollama with cost controls?

This diagram shows a unified AI Gateway pattern in which LiteLLM/PortKey routes requests from VS Code, CLI, and AI Agents across multiple LLM providers while enforcing budget limits and centralizing logs. The Budget Control and Logging components provide cost governance and observability across the heterogeneous endpoints.
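From the client side (VS Code, CLI, or an agent), the pattern amounts to sending every request to one endpoint and choosing the provider by model name. The sketch below only builds such a request with the standard library and does not send it. It assumes the gateway exposes an OpenAI-style chat completions endpoint, as gateways of this kind commonly do; the URL, the key, and the model alias are placeholders, not values taken from the diagram.

```python
import json
import urllib.request

# Assumed values for illustration only.
GATEWAY_URL = "http://localhost:4000/v1/chat/completions"
GATEWAY_KEY = "sk-example"  # placeholder gateway-issued key


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat request addressed to the gateway."""
    body = json.dumps(
        {
            "model": model,  # the gateway maps this alias to a provider
            "messages": [{"role": "user", "content": prompt}],
        }
    ).encode("utf-8")
    return urllib.request.Request(
        GATEWAY_URL,
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {GATEWAY_KEY}",
        },
        method="POST",
    )


# Hypothetical model alias; switching providers changes only this string.
req = build_request("ollama/llama3", "Explain this stack trace")
print(req.full_url, req.get_method())
```

Because the client holds a single gateway key rather than one key per provider, spending limits and logs can be attributed per client at the gateway.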

- Domain: Cloud Azure

- Audience: Azure cloud architects and AI/ML engineers implementing multi-LLM gateway patterns

Generated by Diagrams.so — AI architecture diagram generator with native Draw.io output. Fork this diagram, remix it, or download as .drawio, PNG, or SVG.